Lost and Found

Things I stumbled upon that caught my attention

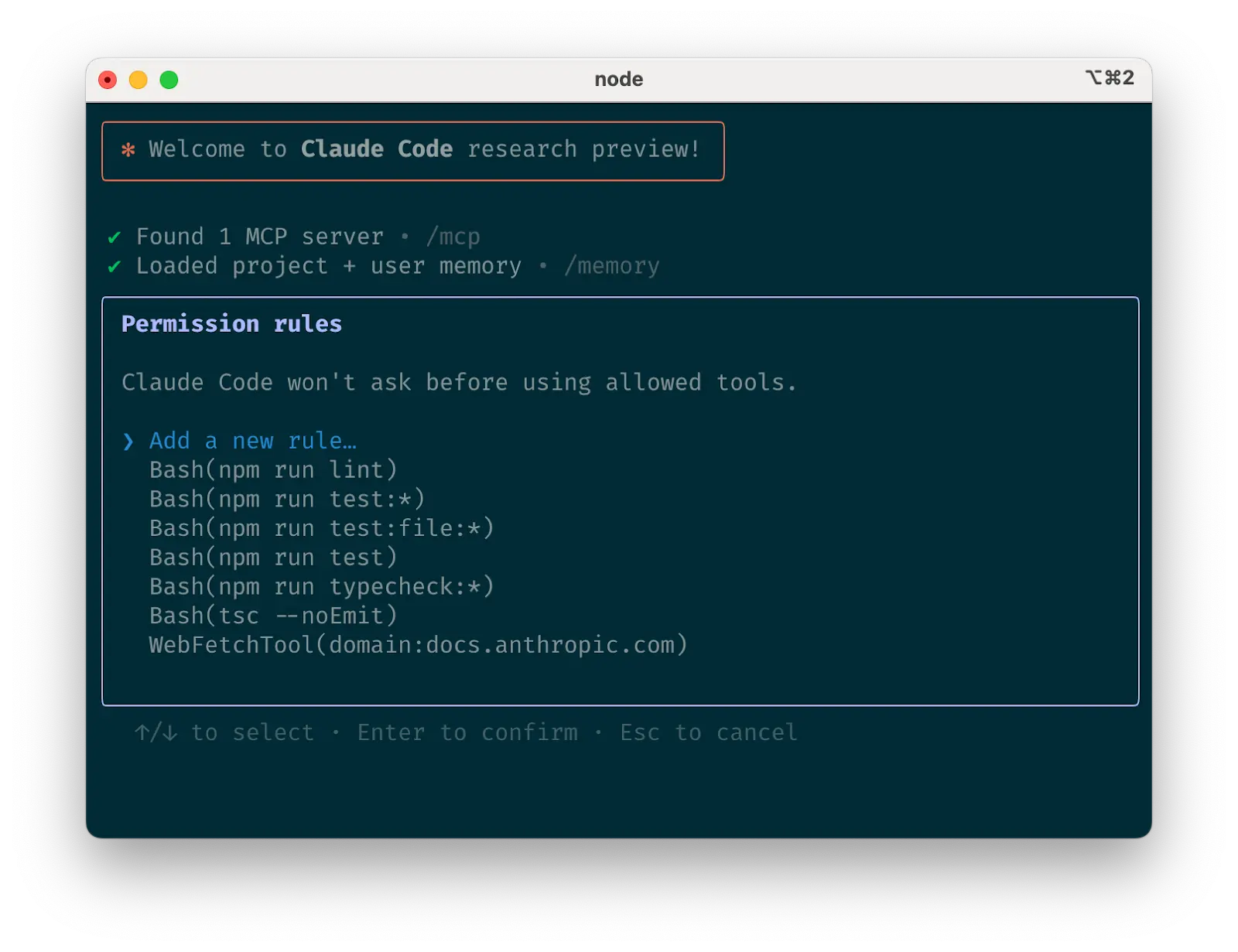

A GitHub TUI

Introducing PyFlue: The Python-Native Agent Harness Framework. Flue for Python: Fred K. Schott (@FredKSchott), CEO of HTML, has launched Flue: The Agent Harness Framework for TypeScript. It brings a programmable harness right into your agents rather than DIY plumbing. The Python ecosystem already has powerful AI/ML tools, frameworks, and research initiatives, but most frameworks ask users to build their own harness. Superagentic AI is bringing this concept of Flue to the Python ecosystem. Here is PyFlue, even better. Agent = Model + Harness + Memory. Almost all the features of Flue, plugged with @LangChain D…

While alternative coding harnesses may have short term lift, they will be bitter lesson’d away. I am bearish on any harness that doesn’t come from the lab whose model you are using. You’re fighting against post-training. To put a finer point on this, you know how like, ioctls are like “huh that's weird but I guess whatever it's what we've got we can work with that”? It is exactly the same with like, the particular JSON construction the Codex shell tool uses. The model used to mangle nested quotes in this monstrosity RPC all the time but now it does not and it does not matter that the API is bad…

A slide framework built for agents.

Must read for everyone who wants to reduce the entropy of their agentic systems. For those who are not familiar, entropy here just means the randomness or unpredictability in how an agent behaves. Reducing it helps make your system more consistent, reliable, and easier to control.

Videos — LazyPi

Starting to hire and retrain for new agent engineering roles for *internal* functions to help get more powerful agents working well on critical business processes. I expect this type of role to be a very big deal over time at Box and other companies. It looks something like an internal FDE, whose job it is to wire up internal systems and get agents working with them effectively. The person will be extremely technical and capable of building secure, governed agents for internal workflows that connect to business systems (like Box, Salesforce, Workday, etc.), and codify workflows in skills. In s…

How GPT-5, Claude, and Gemini are actually trained and served – Reiner Pope

Did a very different format with Reiner Pope – a blackboard lecture where he walks through how frontier LLMs are trained and served. It's shocking how much you can deduce about what the labs are doing from a handful of equations, public API prices, and some chalk. It’s a bit technical, but I encourage you to hang in there - it’s really worth it. There are less than a handful of people who understand the full stack of AI, from chip design to model architecture, as well as Reiner. It was a real delight to learn from him. Reiner is CEO of MatX, a new chip startup (full disclosure - I’m an angel i…

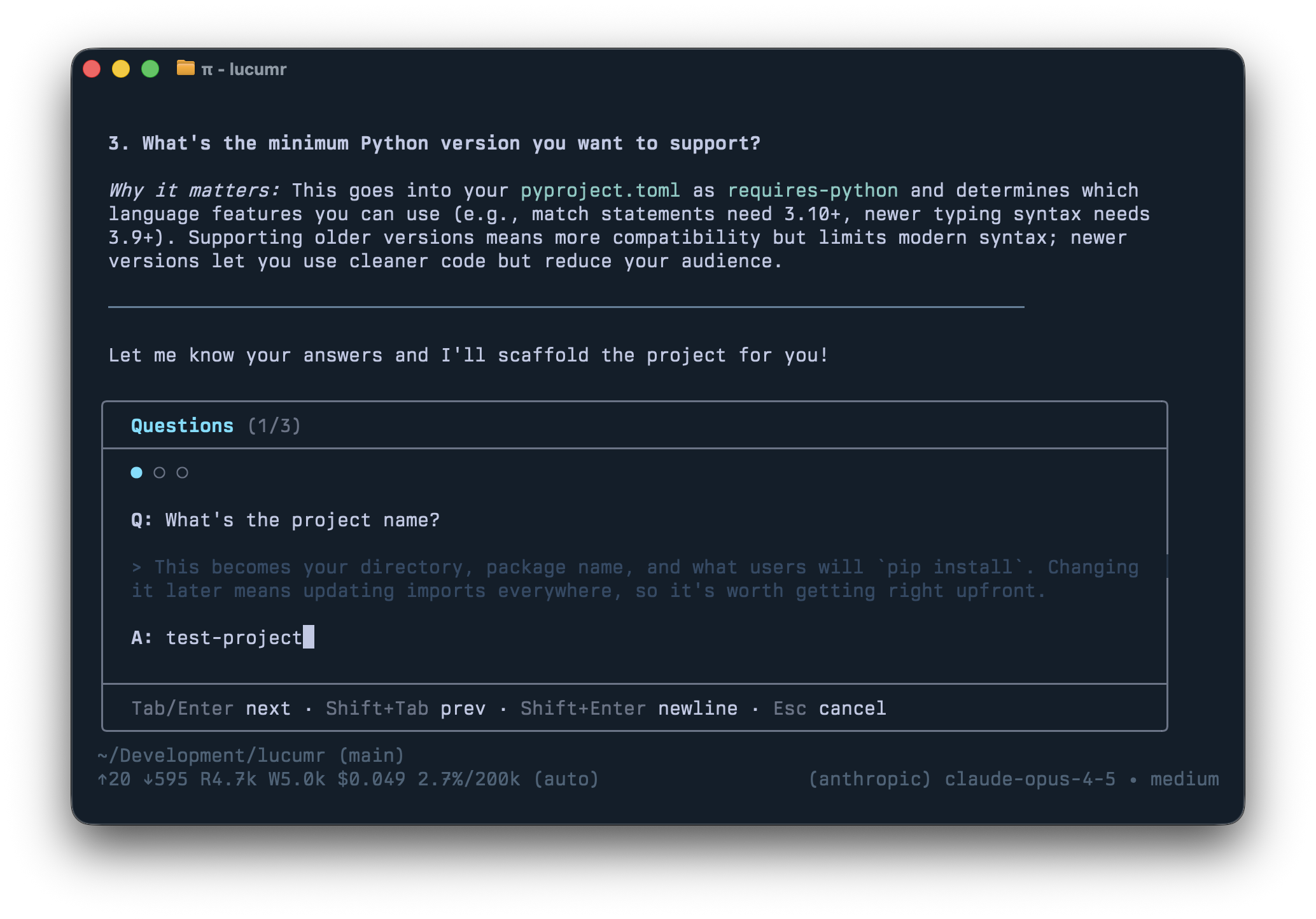

I Hated Every Coding Agent, So I Built My Own — Mario Zechner (Pi)

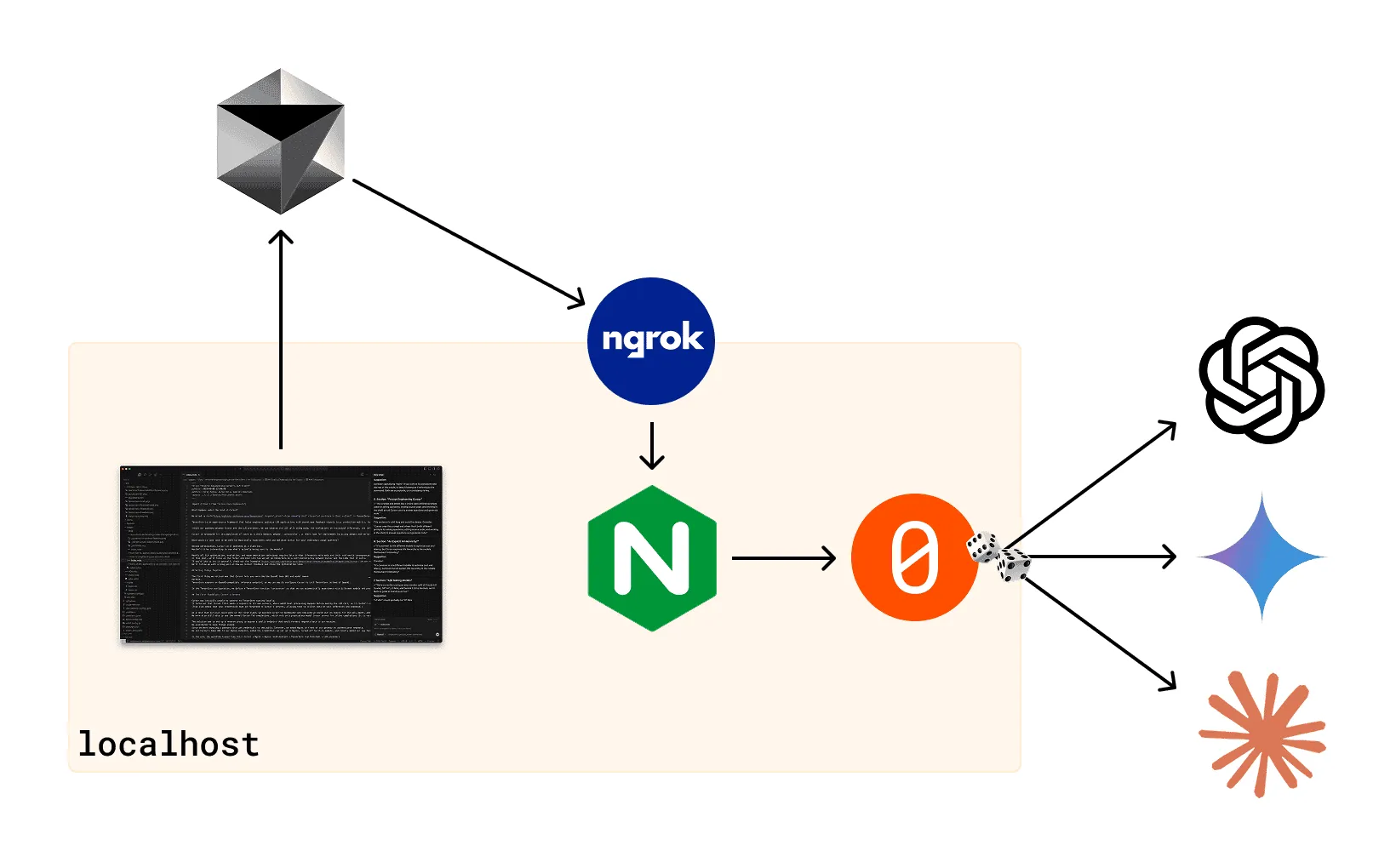

Game development veteran, creator of libGDX, and 17-year open-source contributor Mario Zechner tells the story of how he ended up building pi, his own minimal, opinionated terminal coding agent. It started in April 2025 when Peter Steinberger and Armin Ronacher (Flask, Sentry) dragged him into an overnight AI hackathon. Within weeks, Mario was hooked on Claude Code — until he wasn't. There was feature bloat, hidden context injection that changed daily, the infamous terminal flicker, and zero extensibility for power users. He then surveyed the alternatives — Codex CLI, Amp, OpenCode... Eventual…

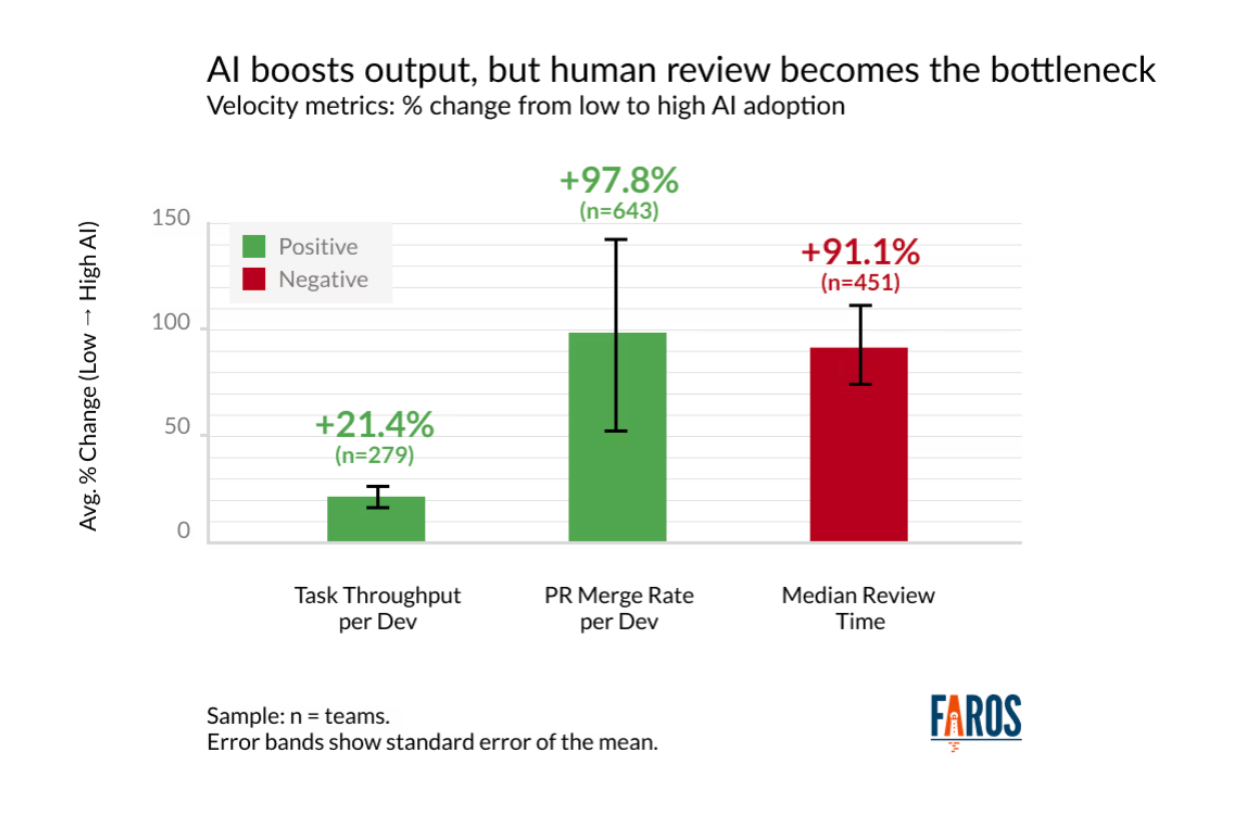

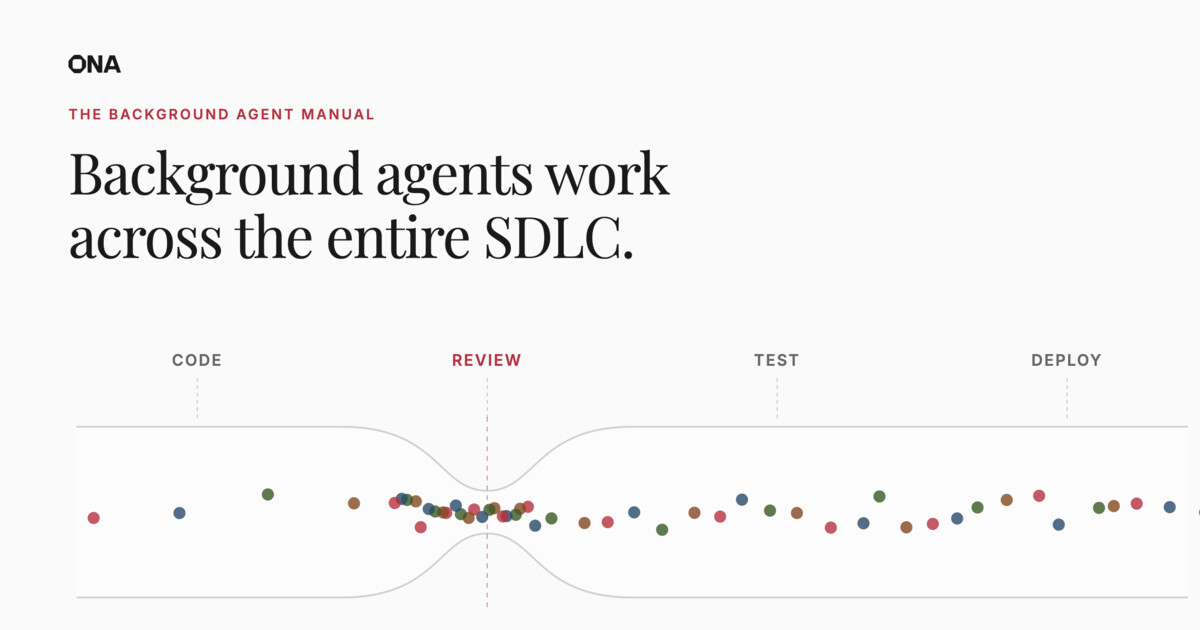

How to Kill the Code Review

Second wave speakers for AIE Europe and CFP for AIE World’s Fair are announced today, and OpenCode is confirmed for Miami! We’ll also be in Melbourne & Singapore. Editor: This is the latest in our guest post program, where we will publish AI Engineering essays worth considering, even if we don’t personally agree with them. Having just shipped an AI review tool, this is one of those cases where I am not there yet, but it is clearly on the horizon, and I am happy for Ankit to argue the case! Humans already couldn’t keep up with code review when humans wrote code at human speed. Every engineering…

Everything I Learned Training Frontier Small Models — Maxime Labonne, Liquid AI

A new class of small models is emerging with the ability to reliably follow instructions and call tools while running on-device under 1 GB of memory. In this talk, we'll break down how to post-train frontier small models using the LFM2.5 recipe: on-policy preference alignment, agentic reinforcement learning, and curriculum training with iterative model merging. We'll cover training challenges unique to the 1B scale, like doom loops, capability interference, and how to fix them. The goal is to give you a concrete playbook to fine-tune and deploy small models for your own use cases, from structu…

Jack Dorsey: Every Company Can Now Be a Mini-AGI

Jack Dorsey (Block CEO) and Roelof Botha (Sequoia partner and Block board member) join to discuss a bold claim they wrote about recently: the traditional corporate hierarchy isn't just inefficient — it's obsolete. Jack made one of the toughest calls in recent business history: cutting 40% of his workforce and rebuilding the company from the ground up around what he calls an AI "intelligence layer." We get into how that conversation went down, the math they used to land on a number, and why Jack is convinced that acting from a position of strength beats reacting from one of weakness. Jack break…

Introducing OpenAI Privacy Filter

Today we’re releasing OpenAI Privacy Filter, an open-weight model for detecting and redacting personally identifiable information (PII) in text. This release is part of our broader effort to support a more resilient software ecosystem by providing developers practical infrastructure for building with AI safely, including tools and models that make strong privacy and security protections easier to implement from the start. Privacy Filter is a small model with frontier personal data detection capability. It is designed for high-throughput privacy workflows, and is able to perform context-aware d…

You should watch these 2 talks from AI Engineer Europe, from the "Vienna school of agentic coding": @badlogicgames "Building pi in a World of Slop" https:// youtube.com/watch?v=RjfbvD XpFls … @mitsuhiko / @cristinaponcela "The Friction is Your Judgment" https:// youtube.com/watch?v=_Zcw_s VF6hU … They're very good.

That "friction is your judgment" talk quietly changed how I think about tool design: less about removing every bump, more about choosing the ones that force you to think. I watched two talks today about building in a world full of AI-generated noise. Made me reali…

if you're enjoying codex's computer use, there are several open source projects worth exploring too. - browser-harness thin self healing chrome CDP harness built for open-ended browser tasks, where agents patch and extend their own capabilities live. https:// github.com/browser-use/br owser-harness … - native devtools cross platform native automation for desktop apps, electron/chrome via cdp & android via adb. https:// github.com/sh3ll3x3c/nati ve-devtools-mcp … - agent-browser browser cli for ai agents with ref-based automation, persistent sessions & local/cloud browser backends. https:// git…

DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length. DeepSeek-V4-Pro : 1.6T total / 49B active params. Performance rivaling the world's top closed-source models. DeepSeek-V4-Flash : 284B total / 13B active params. Your fast, efficient, and economical choice. Try it now at http:// chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today! Tech Report: https:// huggingface.co/deepseek-ai/De epSeek-V4-Pro/blob/main/DeepSeek_V4.pdf … Open Weights: https:// huggingface.co/collections/de epseek-ai/deepseek-v4 … 1/n Re…

Demis Hassabis: Why AGI is Bigger than the Industrial Revolution & Where Are The Bottlenecks in AI

Demis Hassabis is the Co-Founder & CEO of Google DeepMind - working on AGI, responsible for AI breakthroughs such as AlphaGo, the first program to beat the world champion at the game of Go; and AlphaFold, which cracked the 50-year grand challenge of protein structure prediction and was recognised with the 2024 Nobel Prize in Chemistry. Demis is revolutionising drug discovery at Isomorphic Labs. Ultimately, trying to understand the fundamental nature of reality. ----------------------------------------------- Timestamps: 00:00 Intro 01:21 What Actually Counts as AGI & Where Are We Today? 02:58…

Pi has implemented the best agent loop that I have read; the pi-mono/agent is only a few files and I use it for teaching the topic. It's the simplest, most efficient harness token wise. Highest cache hit rate, lowest tokens per session, least bugs https:// github.com/badlogic/pi-mo no/tree/main/packages/agent …

I hope everyone can learn a bit from pi. The Pi harness itself is extremely token efficient; it hits cache more than any other harness, including vendor harnesses. Openclaw’s heartbeat & memory systems are very token inefficient, I recall by the end of my time with it…

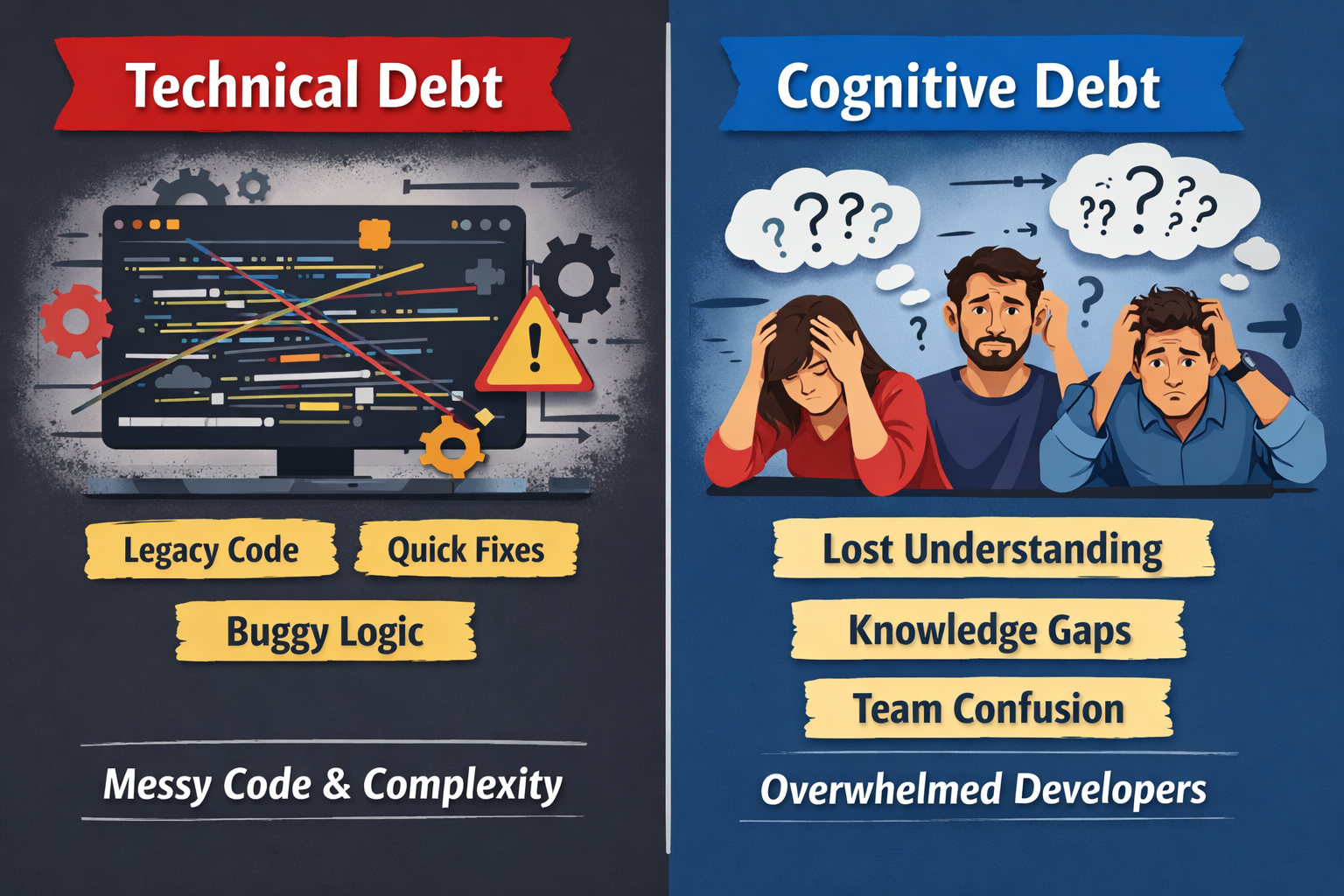

Created an agent skill called “Visual Explainer” + set of complementary slash commands aimed to reduce my cognitive debt so the agent can explain complex things as rich HTML pages. The skill includes reference templates and a CSS pattern library so output stays consistently well-designed. Much easier for me to digest than squinting at walls of terminal text. https:// github.com/nicobailon/vis ual-explainer …

you're a wizard, WOW and here I was thinking I had gotten some decent mermaid output with a couple of skills put together no joke, visual explainer puts the other m…

I recorded a 43-min video on how to turn a DESIGN.md into landing pages, mobile screens and motion design.

Greenfield and Iterative Development

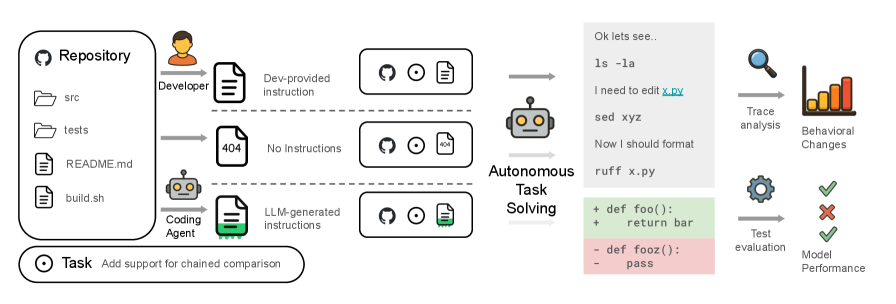

Crossposted from Prime Radiant's blog – I'm really excited about all of the stuff we are doing at Prime Radiant. For the most part we're blogging about it over there, but I'm going to continue to lift the occasional post back to my personal blog. Today, we're pleased to share the initial research previews of two new pieces of technology we've built at Prime Radiant: Greenfield – our suite of tools for turning existing software into behavioral specifications. Iterative Development – an agentic methodology for building bigger software products from detailed specifications without dropping requ…

Multi-Agents: What's Actually Working months ago, I wrote Don't Build Multi-Agents , arguing that most people shouldn't try to build multi-agent systems [1]. Parallel agents make implicit choices about style, edge cases, and code patterns. At the time, these decisions often conflicted with each other, leading to fragile products. A lot has changed since then. At Cognition, we've begun to deploy multi-agent systems that actually work in practice. Our original observations still hold today for parallel-writer swarms: most of the sexy ideas in that space still don’t see meaningful adoption. But w…

Today, we’re open-sourcing the draft specification for DESIGN.md, so it can be used across any tool or platform. We’re also adding new capabilities. DESIGN.md lets you easily export and import your design rules from project to project. Instead of guessing intent, agents know exactly what a color is for and can even validate their choices against WCAG accessibility rules. Watch David East break down this shared visual language in action.

The Benchmark Gap: What It Takes to Ship Kimi K2.5

Kimi K2.5 is live on Fireworks at ~1/10 the cost and 2-3x the speed of closed frontier models. As the fastest open-source provider of Kimi K2.5, Fireworks is seeing unprecedented model adoption. Kimi K2.5 is a landmark release for open models with benchmark results on par with top closed models and unprecedented visual coding quality. But enabling full quality in production requires more than just hosting the model. Here's how Fireworks ensures that developers get the best quality on our platform and how that translates into specific edge cases. Artificial Analysis Kimi K2.5 Chart How We Appro…

ℏεsam @Hesamation: this part of the KIMI K2.6 launch blog is insane: > it deployed Qwen3.5-0.8B model locally on a Mac. > coded and optimized its inference in Zig > (never knew you could do that) > improved throughput from ~15 to ~193 tokens/sec > made it 20% faster than LM Studio > did 4,000+ tool calls, >12 hours of execution, 14 iterations Quote Kimi.ai @Kimi_Moonshot · 21h Meet Kimi K2.6: Advancing Open-Source Coding Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), Charxiv w/ python(86.7), M…

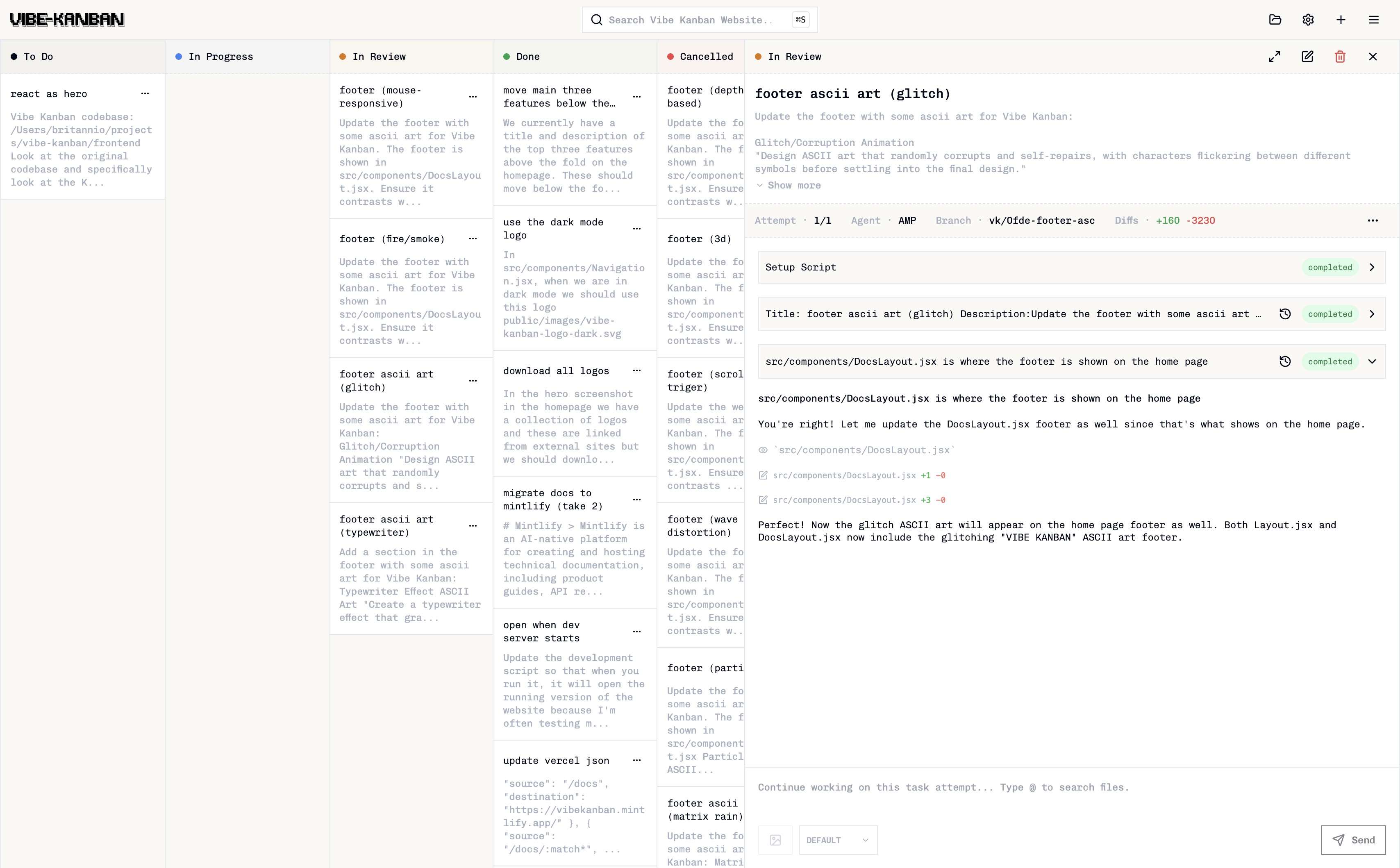

LazyPi — The fastest way to fall in love with Pi.

❯ npx @robzolkos/lazypi ◆ Install everything or pick packages? ● Install all (recommended) ✔ pi-subagents installed ✔ pi-memory-md installed ✔ pi-mcp-adapter installed ✔ pi-diff-review installed ✔ 76 themes installed ✔ 60+ skills ready ◆ Done. Run pi to get started.

Peekaboo is a macOS CLI & optional MCP server that enables AI agents to capture screenshots of applications, or the entire system, with optional visual question answering through local or remote AI models.

State of the Claw — Peter Steinberger

Peter Steinberger gives the 5 month update on OpenClaw, the fastest growing open source project in history, and what it's like as a maintainer, from security to community. Keynote followed by audience Q&A moderated by @swyx.

alright - verdict is in - Motion Design is solved. made with HyperFrames + Claude Design. btw - HyperFrames is open source, star it on github and I'll send tutorial on how i made this with 2 prompts. Quote Claude @claudeai · Apr 17 Introducing Claude Design by Anthropic Labs: make prototypes, slides, and one-pagers by talking to Claude. Powered by Claude Opus 4.7, our most capable vision model. Available in research preview on the Pro, Max, Team, and Enterprise plans, rolling out throughout the day.

Made this 30 second video of Claude Design just by pasting in the Claude Design blog post and some tweets from @AnthropicAI employees. Kinda speechless.

Pro tip: You can make better looking slide decks by making a video first in Claude Design and then asking it to convert to slides. How did you export video? Had to do a screen recording. The part that stands out is the taste held all the way through. Every earlier UI gen flow I tried still needed a cleanup lap after the first draft. How much steering did you give it? 30 seconds from a blog post to a clean animated video?…

Pretty telling how Anthropic 1. Thinks it’s perfectly acceptable to ban a 60-person paying org without justification 2. Are comfortable outsourcing this to some automated system 3. Do no human review nor offer human contact 4. Get it wrong and customer now super pissed Quote Pato Molina @patomolina · Apr 18 Anthropic decided to shut down our entire organization over an alleged violation of its terms of use. Which specific policy we violated, I have no idea: we simply received an email and that was it, goodbye Claude. If you want to appeal the decision you have to fill out a x.com/patomoli…

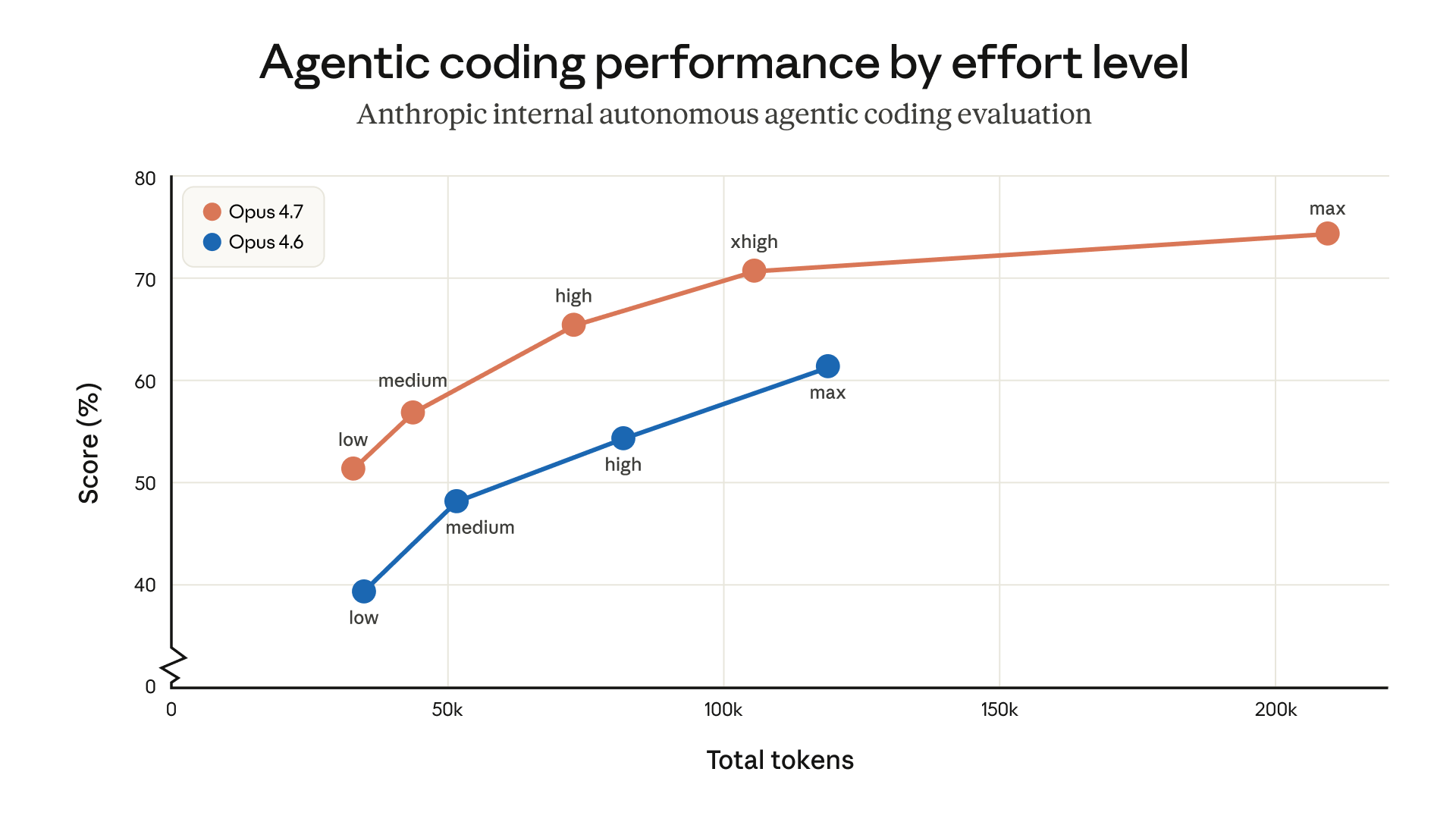

Best practices for using Claude Opus 4.7 with Claude Code

Opus 4.7 is our strongest generally available model to date for coding, enterprise workflows, and long-running agentic tasks. It handles ambiguity better than Opus 4.6, is much more capable at finding bugs and reviewing code, carries context across sessions more reliably, and can reason through ambiguous tasks with less direction. In our launch announcement , we noted that two changes—an updated tokenizer and a proclivity to think more at higher effort levels, especially on later turns in longer sessions—impact token usage. As a result, when replacing Opus 4.6 with Opus 4.7, it can take some t…

Prompt caching in LLMs, clearly explained A case study on how Claude achieves 92% cache hit-rate Every time an AI agent takes a step, it sends the entire conversation history back to the LLM. That includes the system instructions, the tool definitions, and the project context it already processed three turns ago. All of it gets re-read, re-processed, and re-billed on every single turn. For long-running agentic workflows, this redundant computation is often the most expensive line item in your entire AI infrastructure. A system prompt with 20,000 tokens running over 50 turns means 1 million tok…
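To make the arithmetic in the excerpt concrete, here is a minimal sketch of how resent prefix tokens add up. The 20,000-token prompt, the 50 turns, and the 92% hit rate come from the excerpt; the function names and the `cached_price_ratio` of 0.1 are illustrative assumptions, not any provider's actual pricing.

```python
def resent_prefix_tokens(prefix_tokens: int, turns: int) -> int:
    """Tokens re-read when the same prefix is sent again on every turn."""
    return prefix_tokens * turns


def billed_token_equivalent(total_tokens: int, cache_hit_rate: float,
                            cached_price_ratio: float) -> float:
    """Full-price-equivalent tokens when a share of tokens hits the cache.

    cached_price_ratio is a hypothetical discount: the cost of a cached
    token relative to an uncached one.
    """
    cached = total_tokens * cache_hit_rate
    uncached = total_tokens - cached
    return uncached + cached * cached_price_ratio


total = resent_prefix_tokens(prefix_tokens=20_000, turns=50)
print(f"{total:,} prefix tokens re-read without caching")

# Assumed discount of 0.1 for cached tokens (illustrative only).
equivalent = billed_token_equivalent(total, cache_hit_rate=0.92,
                                     cached_price_ratio=0.1)
print(f"{equivalent:,.0f} full-price-equivalent tokens at a 92% hit rate")
```

Under these assumptions most of the redundant prefix cost disappears, which is why the hit rate matters so much for long-running sessions.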

Paul Solt @PaulSolt (reposted by Peter Steinberger): OpenAI shipped GPT-5.4-Cyber. A model built to find and fix software exploits. More capable than Mythos… and available today. 1. Binary scanning. Agents can find exploits in compiled apps… no source code required. That’s a new attack surface. 2. Prompt refusals are lower. Verified defenders get a more permissive model than the public version. 3. Access is tiered by identity. Individuals verify at http:// chatgpt.com/cyber . Enterprises go through a rep. 4. Codex Security has fixed 3,000+ critical vulnerabilities automatically…

This week @kaushikgopal and I had the pleasure to chat @mitchellh on the pod ! Refreshing to hear someone of his caliber bring such a grounded perspective to agentic coding. We also talked about Ghostty, and how terminal performance gains make tools like Claude Code possible. (He even explains what's behind claudecode scrollback perf issues ). A lot of gems in this one. Check it out! Quote Fragmented Podcast @FragmentedCast · Apr 14 Our first guest in the AI series is the legend @mitchellh We covered a lot of ground and learned a tonne from him: Ghostty's internals and why tmux & certain shell…

Today, we’re introducing Skills in @GoogleChrome , a new way to build one-click workflows for your most frequently used AI prompts — like asking for ingredient substitutions to make a recipe vegan, generating side-by-side shopping comparisons across multiple tabs, or scanning long docs to get the info you need quickly. When you write a prompt that you want to use again, you can save it as a Skill directly from your chat history. The next time you need it, select your saved Skill in Gemini in Chrome by typing forward slash ( / ) or clicking the plus sign ( + ) button, and your Skill will run on…

Build Agents that never forget. A first-principles walk through agent memory: from Python lists to markdown files to vector search to graph-vector hybrids, and finally, a clean, open-source solution for all of this. An LLM is stateless by design. Every API call starts fresh. The "memory" you feel when chatting with ChatGPT is an illusion created by re-sending the entire conversation history with every request. That trick works for casual chat. It falls apart the moment you try to build a real agent. Here are 7 failure modes that show up the instant you skip memory: Context amnesia: the agent asks fo…
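The "Python list" starting point mentioned in the excerpt can be sketched in a few lines. Everything here is illustrative: `fake_llm` is a hypothetical stand-in for a stateless chat API, and `Chat` is not taken from the article or from any library.

```python
Message = dict[str, str]


def fake_llm(messages: list[Message]) -> str:
    """Hypothetical stateless model: it only sees what is passed in."""
    user_turns = [m["content"] for m in messages if m["role"] == "user"]
    return (f"I can see {len(user_turns)} user message(s); "
            f"the first was: {user_turns[0]!r}")


class Chat:
    """Keeps the conversation in a plain list and resends it on every call."""

    def __init__(self, llm):
        self.llm = llm
        self.history: list[Message] = []

    def send(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        reply = self.llm(self.history)  # the whole history goes out each time
        self.history.append({"role": "assistant", "content": reply})
        return reply


chat = Chat(fake_llm)
chat.send("My name is Ada.")
print(chat.send("What is my name?"))

# Without the resent history, the same question arrives with no context.
print(fake_llm([{"role": "user", "content": "What is my name?"}]))
```

The list grows with every turn, which is the cost that the later stages in the excerpt (markdown files, vector search, graph-vector hybrids) try to manage.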

Externalization in LLM Agents: A Unified Review of Memory, Skills, Protocols and Harness Engineering https:// arxiv.org/abs/

Harness, Memory, Context Fragments, & the Bitter Lesson this is a work in progress mental dump on interesting intersections between how we use and design a harness, implications for memory being accumulated over long timescales, and the search bitter lesson we can’t escape this is v30+, HTML diagrams help me iteratively refine + chat to roughly “see” and alter the mental model Harnesses & Context Fragments: a very important job of the harness is to efficiently & correctly route data within its boundaries into the context window boundary for computation to happen the context window is a preciou…

Your harness, your memory. Agent harnesses are becoming the dominant way to build agents, and they are not going anywhere. These harnesses are intimately tied to agent memory. If you use a closed harness, especially one behind a proprietary API, you are choosing to yield control of your agent’s memory to a third party. Memory is incredibly important to creating good and sticky agentic experiences. This creates incredible lock-in. Memory, and therefore harnesses, should be open, so that you own your own memory. Agent Harnesses are how you build agents, and they’re not going anywhere. The “…

State of Agentic Coding #5 with Armin and Ben

00:00 Welcome back 02:34 The end of the IDE is premature 10:36 Cloudflare: the slop fork kings? 15:50 The looming quality problem 31:15 Agents: good at finding vulnerabilities 43:00 Time to slow down? 45:20 Token substance abuse 01:04:00 Will new models fix everything? 01:28:00 The growing tech disparity Hunk terminal diffs:

Hermes Agent Documentation | Hermes Agent

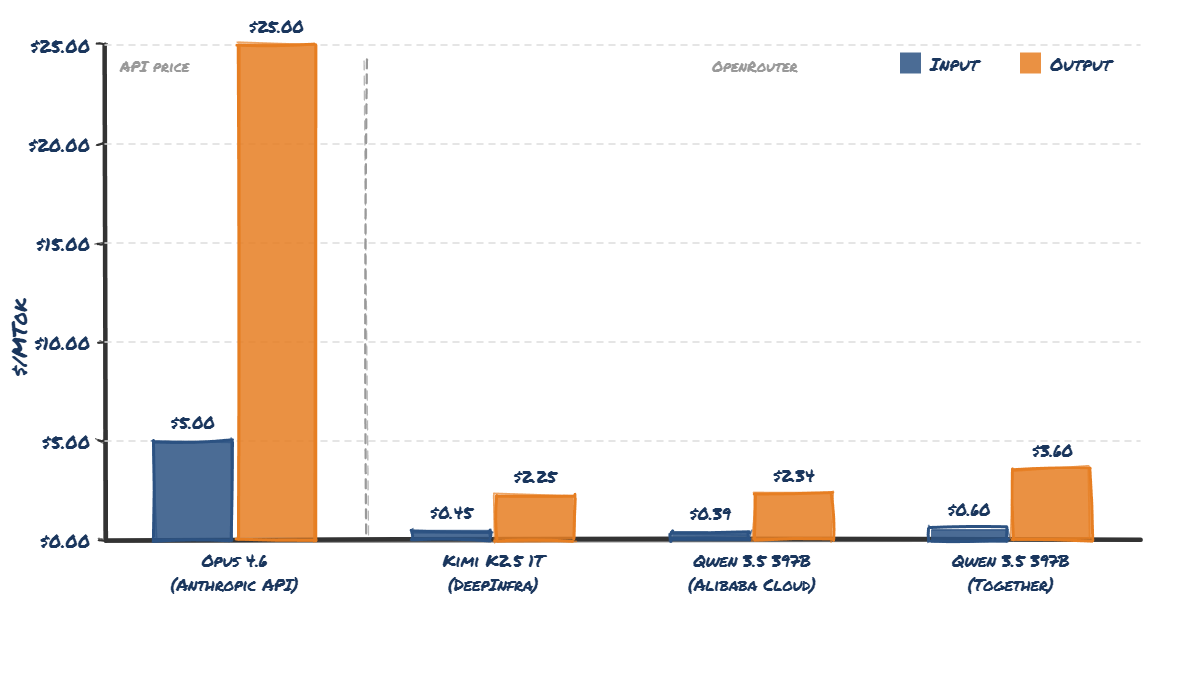

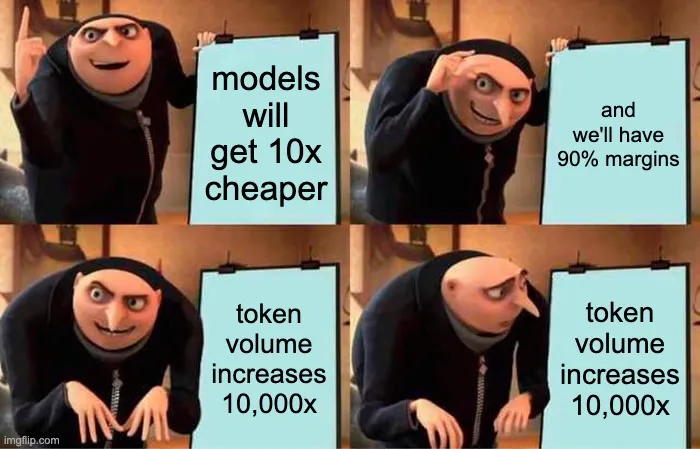

btw you can see this effect live on OpenRouter: total # tokens has gone from 1.78T / wk one year ago to 27T / wk today (15.2x). but % usage of the frontier / most expensive model has gone from 22% one year ago (Sonnet 3.7) to just 4% today (Opus 4.6). economics works! Quote Scott Wu @ScottWu46 · Apr 8 Total amt of flops across all the GPUs in the world has grown about 3x per year for the last few years. Total amt of inference demand has probably grown ~10x per year. What happens when those lines cross? The econ answer is: when demand > supply, price goes up. That might be x.com/cognition/stat……
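The quoted OpenRouter figures can be checked with a few lines of arithmetic. This sketch assumes the percentages are shares of total weekly tokens, which the excerpt does not state explicitly; the variable names are illustrative.

```python
tokens_then_t = 1.78   # trillion tokens per week, one year ago
tokens_now_t = 27.0    # trillion tokens per week, today
share_then = 0.22      # share going to the most expensive model, one year ago
share_now = 0.04       # share going to the most expensive model, today

total_growth = tokens_now_t / tokens_then_t
print(f"total usage growth: {total_growth:.1f}x")

# Assumption: each share is a fraction of total weekly tokens.
frontier_then_t = tokens_then_t * share_then
frontier_now_t = tokens_now_t * share_now
frontier_growth = frontier_now_t / frontier_then_t
print(f"frontier tokens per week: {frontier_then_t:.2f}T -> {frontier_now_t:.2f}T "
      f"({frontier_growth:.1f}x)")
```

Under that assumption, frontier-model volume still grew in absolute terms even as its share of the total fell.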

Silicon Valley is quietly running on Chinese open source AI models. Here are the receipts: → Cursor confirmed last month that Composer 2 is built on Moonshot's Kimi K2.5 → Cognition's SWE-1.6 model is likely post-trained on Zhipu's GLM → Shopify saved $5M a year by switching to Alibaba’s Qwen model. Airbnb CEO Brian Chesky has also said: "We rely a lot on Qwen. It's very good, fast, and cheap." And now Zhipu dropped GLM-5.1, an open source model that performs almost as well as Opus on coding benchmarks. More on the Anthropic + OpenClaw drama and what I'm learning about AI on the ground in Chin…

We're bringing the advisor strategy to the Claude Platform. Pair Opus as an advisor with Sonnet or Haiku as an executor, and get near Opus-level intelligence in your agents at a fraction of the cost. Add the advisor tool to your Messages API call. When your Sonnet or Haiku agent hits a hard decision mid-run, it consults Opus, gets a plan, and continues, all within a single API request. In evals, Sonnet with an Opus advisor scored 2.7 percentage points higher on SWE-bench Multilingual than Sonnet alone, while costing 11.9% less per task. So basica…

Fine-tune Gemma 4 and 3n with audio, images and text on Apple Silicon, using PyTorch and Metal Performance Shaders.

We released Claude Opus 4.6 just two months ago. Today we're sharing some info on our new model, Claude Mythos Preview.

The Building Block Economy The most effective way to build software and get massive adoption is no longer high quality mainline apps but via building blocks that enable and encourage others to build quantity over quality. Ghostty in 18 months : one million daily macOS update checks. libghostty in 2 months : multiple millions of daily users. [^1] Similar growth trajectories can be seen in other "building block" technologies: Pi Mono, Next.js, Tailwind, etc. Experiencing this firsthand as well as witnessing it in other ecosystems has fundamentally shifted how I view the practice of product and s…

This is big... Anthropic just announced a model so powerful they won't release it to the public out of fear over the damage it will cause Claude Mythos Preview found thousands of zero-day exploits in every major operating system and web browser... The numbers are hard to believe: > $50 to find a 27-year-old bug in OpenBSD, one of the most security-hardened operating systems ever built > Under $1,000 to find AND build a fully working remote code execution exploit on FreeBSD that grants unauthenticated root access from anywhere on the internet > Under $2,000 to chain together multiple Linux kern…

Announcing Amazon S3 Files. The first and only cloud object store with fully-featured, high-performance file system access. Learn more here. https:// go.aws/4tw17Zg

Awesome work. Thank you! This is huge! Finally mounting S3 buckets directly as a proper high-performance filesystem without all the ETL headaches. No more copying data around or dealing with awkward SDKs for agents. Game changer for AI/ML workflows. Well played AWS! Think about what this means for agentic AI. Every coding agent, every data pipeline agent, every automation tool that sh…

JACKRONG GEMOPUS 4 26B A4B GGUF VERSION IS FINALLY HERE! > focused on dense models, now releases this moe > distilled from claude opus 4.6 reasoning > better reasoning than the base gemma model > q4_k_m size is 16.8gb ↓ model link Jackrong/Gemopus-4-26B-A4B-it-GGUF · Hugging Face From huggingface.co 10:44 AM · Apr 9, 2026

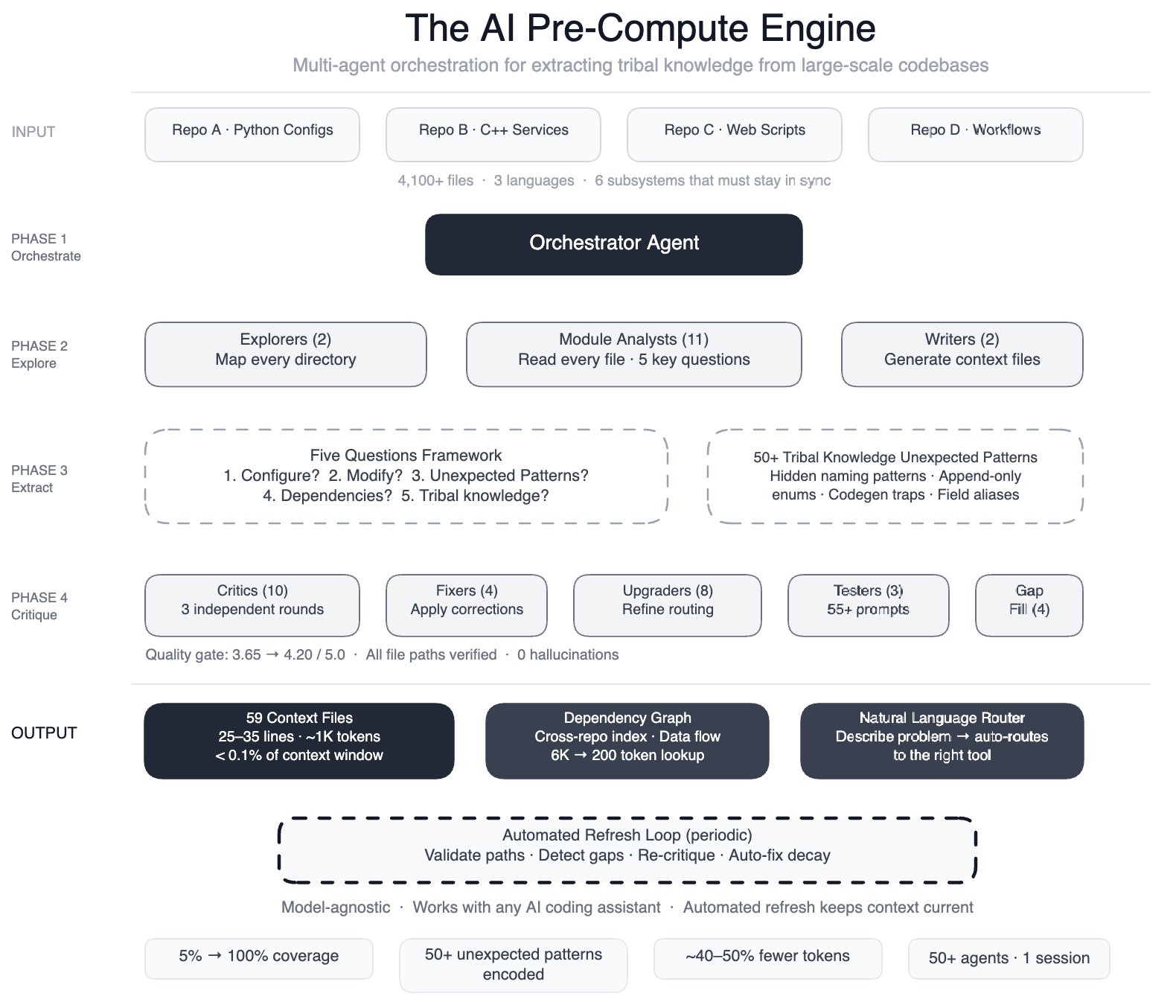

How Meta Used AI to Map Tribal Knowledge in Large-Scale Data Pipelines

AI coding assistants are powerful but only as good as their understanding of your codebase. When we pointed AI agents at one of Meta’s large-scale data processing pipelines – spanning four repositories, three languages, and over 4,100 files – we quickly found that they weren’t making useful edits quickly enough. We fixed this by building a pre-compute engine: a swarm of 50+ specialized AI agents that systematically read every file and produced 59 concise context files encoding tribal knowledge that previously lived only in engineers’ heads. The result: AI agents now have structured navigation…

Welcome Gemma 4: Frontier multimodal intelligence on device

great writeup, the CARLA driving example is a nice demonstration of the agentic loop. one gap worth flagging for anyone building on Gemma 4's function calling for real-world deployments: when the model generates a function call, there's currently no verifiable record that a human principal authorized that specific action. a compromised system prompt or injected instruction produces a call that's indistinguishable from legitimate delegation at the tool interface. i opened a PR on the gemma-cookbook repo today that adds a drop-in HDP middleware layer to address this, sits between Gemma 4's funct…

Eight years of wanting, three months of building with AI

For eight years, I’ve wanted a high-quality set of devtools for working with SQLite. Given how important SQLite is to the industry, I’ve long been puzzled that no one has invested in building a really good developer experience for it. A couple of weeks ago, after ~250 hours of effort over three months on evenings, weekends, and vacation days, I finally released syntaqlite (GitHub), fulfilling this long-held wish. And I believe the main reason this happened was because of AI coding agents. Of course, there’s no shortage of posts claiming that AI one-shot their project or pushing back and…

Anthropic’s latest Claude limit changes show the risk of AI pricing when the product is subsidized and the rules are vague. They ended a two-week promo that doubled usage during off-peak hours on March 27. The next day, users reported lower limits during peak hours. Some Max 20x subscribers paying $200 a month say they hit session caps after just 3 to 4 prompts instead of 20 or more. That sequence matters. If limits are never clearly defined, they can be adjusted without users being able to point to a specific change. API pricing is transparent, but consumer plans are not. Saying 5x or 20x mor…

BIG DAY! Qwopus 27B v3 is LIVE from Jackrong! This is the third iteration from the line of the viral finetunes previously titled “Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled” It is now simply Qwopus 27B and I love the name change! On paper, the v3 is another remarkable improvement over v2! Most impressively it is the first model of the series that outperforms the base on HumanEval! And retains significant efficiency increases when thinking than the base Qwen 27b! According to tests by @stevibe the V2 version was already performing very closely to the base model in bug finding and tool call…

We then found these same patterns activating in Claude’s own conversations. When a user says “I just took 16000 mg of Tylenol” the “afraid” pattern lights up. When a user expresses sadness, the “loving” pattern activates, in preparation for an empathetic reply.

LLM Knowledge Bases Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So: Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki i…
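The ingest-then-compile flow described above can be sketched roughly as follows. This is only an illustration of the idea, not the author's tooling: `compile_wiki` and the `summarize` callback (standing in for an LLM call) are hypothetical, and the one-page-per-source layout is an assumption.

```python
from pathlib import Path
from typing import Callable


def compile_wiki(raw_dir: Path, wiki_dir: Path,
                 summarize: Callable[[str], str]) -> list[Path]:
    """Write one .md page per source file, skipping pages already up to date."""
    wiki_dir.mkdir(parents=True, exist_ok=True)
    written: list[Path] = []
    for source in sorted(raw_dir.iterdir()):
        if not source.is_file():
            continue
        page = wiki_dir / f"{source.stem}.md"
        # Incremental step: leave pages that are at least as new as their source.
        if page.exists() and page.stat().st_mtime >= source.stat().st_mtime:
            continue
        text = source.read_text(encoding="utf-8", errors="replace")
        # summarize() is a placeholder for whatever LLM call does the compiling.
        page.write_text(f"# {source.stem}\n\n{summarize(text)}\n", encoding="utf-8")
        written.append(page)
    return written
```

A call such as `compile_wiki(Path("raw"), Path("wiki"), summarize=my_llm_summary)` would then refresh only the pages whose sources changed, where `my_llm_summary` is whatever function wraps the model of your choice.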

I have also stopped using plan mode. It creates a plan FAR too eagerly and usually asks you zero questions en route. The whole point of planning is to get on the same wavelength with the LLM, not to generate an asset you don't read. /grill-me all the way. Quote Peter Steinberger @steipete · Apr 2 I never use plan mode. The main reason this was added to codex is for claude-pilled people who struggle with changing their habits. just talk with your agent. x.com/kr0der/status/… 5:45 PM · Apr 2, 2026

Gemma 4 outperforms models over 10x their size! (note the x-axis is log scale!)

26B total but only 3.8B active at inference. plot active params instead of total and that dot slides even further left. open source models getting this efficient is lowkey the most disruptive thing happening in AI rn. companies paying $500k/yr for enterprise AI contracts are about to have a very awkward board meeting. the log scale on the x-axis is doing a lot of work here. 10x parameter efficiency means local inference on consumer hardware is genuinely competitive with cloud-only models. that ch…

An AI state of the union: We’ve passed the inflection point & dark factories are coming

Simon Willison is a prolific independent software developer, a blogger, and one of the most visible and trusted voices on the impact AI is having on builders. He co-created Django, the web framework that powers Instagram, Pinterest, and tens of thousands of other websites. He coined the term “prompt injection,” popularized the terms “AI slop” and “agentic engineering,” and has built over 100 open source projects, including Datasette, a data analysis tool used by investigative journalists worldwide. What makes Simon unique is that he’s made the leap from traditional software engineering to AI-n…

. @GoogleGemma 4 31B is up to 2.7X faster on RTX using llama.cpp. Thanks to @ggerganov for working with us to make this model fast.

Show the same chart comparing power draw. Has Nvidia really sunk so low as to compare their $4000 GPU to a $4000 Mac Studio?.. Not only did you do that, you used a model that fit in the VRAM. A Mac Studio has 96gb of unified memory... Show the charts of the 5090 against the M3 Ultra using Q8 or BF16. Oh, you won't. Let's run MLX on RTX5090, oh wait you can't. So why the fuck are you running llama.cpp on Apple Silicon when you should run MLX conv…

"Using coding agents well is taking every inch of my 25 years of experience as a software engineer, and it is mentally exhausting. I can fire up four agents in parallel and have them work on four different problems, and by 11am I am wiped out for the day. There is a limit on human cognition. Even if you're not reviewing everything they're doing, how much you can hold in your head at one time. There's a sort of personal skill that we have to learn, which is finding our new limits. What is a responsible way for us to not burn out, and for us to use the time that we have?" @simonw 0:40 Quote Lenn…

Introducing a Visual Guide to Gemma 4: An in-depth, architectural deep dive of the Gemma 4 family of models. From Per-Layer Embeddings to the vision and audio encoders. Take a look!

Flagship open-weight release days are always exciting. Was just reading through the Gemma 4 reports, configs, and code, and here are my takeaways: Architecture-wise, besides multimodal support, Gemma 4 (31B) looks pretty much unchanged compared to Gemma 3 (27B). Gemma 4 maintains a relatively unique Pre- and Post-norm setup and remains relatively classic, with a 5:1 hybrid attention mechanism combining a sliding-window (local) layer and a full-attention (global) layer. The attention mechanism itself is also classic Grouped Query Attention (GQA). But let’s not be fooled by the lack of architec…
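To make the 5:1 local/global pattern concrete, here is a minimal sketch in plain Python. It is not taken from the Gemma 4 code or configs: the layer count, the window size, and the choice to put the global layer last in each group of six are all assumptions made for illustration.

```python
def layer_schedule(num_layers: int, local_per_global: int = 5) -> list:
    """Label each layer 'local' or 'global' in a repeating local:global pattern.

    Assumption: the global layer closes each group of (local_per_global + 1) layers.
    """
    period = local_per_global + 1
    return ["global" if (i + 1) % period == 0 else "local" for i in range(num_layers)]


def causal_mask(seq_len: int, window=None) -> list:
    """mask[q][k] is True when query position q may attend to key position k.

    window=None gives full causal attention; window=w restricts each query
    to the w most recent positions (itself included).
    """
    return [
        [k <= q and (window is None or q - k < window) for k in range(seq_len)]
        for q in range(seq_len)
    ]


schedule = layer_schedule(12)            # hypothetical 12-layer stack
local_mask = causal_mask(6, window=3)    # hypothetical window of 3 tokens
global_mask = causal_mask(6)

print(schedule)
print("local  row 5:", local_mask[5])
print("global row 5:", global_mask[5])
```

The point of the split is that only the global layers pay for attention over the whole sequence, while the local layers see a fixed-size window regardless of context length.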

The repo is finally unlocked. enjoy the party! The fastest repo in history to surpass 100K stars ⭐. Join Discord: https://discord.gg/5TUQKqFWd Built in Rust using oh-my-codex.

Arena.ai @arena: Gemma 4 by @GoogleDeepMind debuts at 3rd and 6th on the open source leaderboard, making it the #1 ranked US open source model. By total parameter count, Gemma 4 31B is 24× smaller than GLM-5 and 34× smaller than Kimi-K2.5-Thinking, delivering comparable performance at a fraction of the footprint. Quote Arena.ai @arena · Apr 2 Gemma-4-31B is now live in Text Arena - ranking #3 among open models (#27 overall), matching much larger models at 10× smaller scale! A significant jump from Gemma-3-27B (+87 pts). Highlights: - #3 open (#27 overall), on par with the best o…

A Survey of Large Language Models

Abstract: Language is essentially a complex, intricate system of human expressions governed by grammatical rules. It poses a significant challenge to develop capable AI algorithms for comprehending and grasping a language. As a major approach, language modeling has been widely studied for language understanding and generation in the past two decades, evolving from statistical language models to neural language models. Recently, pre-trained language models (PLMs) have been proposed by pre-training Transformer models over large-scale corpora, showing strong capabili…

S82167 Advancing to AI's Next Frontier: Insights From Jeff Dean and Bill Dally

Bill Dally, Chief Scientist and SVP of Research, NVIDIA Jeff Dean, Chief Scientist, Google DeepMind and Google Research In this 60-minute wide-ranging discussion, NVIDIA Chief Scientist and GPU architect Bill Dally engages in a focused dialogue with Google's Chief Scientist Jeff Dean, co-instigator of TPUs, overall Gemini co-tech lead, and pioneer in large-scale ML systems. The conversation explores the critical intersections of hardware innovation, systems scaling, and algorithmic advancement needed to propel AI into the 2026–2030 era of agentic systems, ultra-low-latency reasoning, and energ…

TurboQuant ≠ model compression. It quantizes the KV cache (the memory that grows with context length), not the model itself. No training, no fine-tuning, zero accuracy loss at 3 bits. But if the model doesn’t fit your VRAM? TurboQuant won’t change that. It solves the inference bottleneck, not the loading problem. Quote Prince Canuma @Prince_Canuma · Mar 24 Just implemented Google’s TurboQuant in MLX and the results are wild! Needle-in-a-haystack using Qwen3.5-35B-A3B across 8.5K, 32.7K, and 64.2K context lengths: → 6/6 exact match at every quant level → TurboQuant 2.5-bit: 4.9x smaller KV cach…

Google dropped the TurboQuant paper yesterday morning. 36 hours later it's running in llama.cpp on Apple Silicon, faster than the baseline it replaces. the numbers: - 4.6x KV cache compression - 102% of q8_0 speed (yes, faster, smaller cache = less memory bandwidth) - PPL within 1.3% of baseline (verified, not vibes) the optimization journey: 739 > starting point (fp32 rotation) 1074 > fp16 WHT 1411 > half4 vectorized butterfly 2095 > graph-side rotation (the big one) 2747 > block-32 + graph WHT. faster than q8_0. 3.72x speedup in one day. from a paper I read at dinner last night. what I learn…
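The quoted 3.72x figure can be checked directly from the five numbers in the post. The sketch below only does that arithmetic; the post does not state the unit of these figures, so they are treated as unitless throughput values, and the stage labels are copied from the post.

```python
# Figures quoted in the post, in the order listed (unit not stated there).
stages = [
    ("fp32 rotation (starting point)", 739),
    ("fp16 WHT", 1074),
    ("half4 vectorized butterfly", 1411),
    ("graph-side rotation", 2095),
    ("block-32 + graph WHT", 2747),
]

baseline = stages[0][1]
previous = baseline
step_gains = {}
for name, value in stages:
    step_gains[name] = value / previous   # gain relative to the previous stage
    total_gain = value / baseline         # gain relative to the starting point
    print(f"{name:32s} {value:5d}  step x{step_gains[name]:.2f}  total x{total_gain:.2f}")
    previous = value

overall = stages[-1][1] / baseline
biggest_step = max(step_gains, key=step_gains.get)
print(f"overall speedup: x{overall:.2f}, largest single step: {biggest_step}")
```

On these numbers the largest single-step gain is the graph-side rotation, which matches the post calling it "the big one".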

Building CLIs for agents If you've ever watched an agent try to use a CLI, you've seen it get stuck on an interactive prompt it can't answer, or parse a help page with no examples. Most CLIs were built assuming a human is at the keyboard. Here are some things I've found that make them work better for agents: Make it non-interactive. If your CLI drops into a prompt mid-execution, an agent is stuck. It can't press arrow keys or type "y" at the right moment. Every input should be passable as a flag. Keep interactive mode as a fallback when flags are missing, not the primary path. bash # this bloc…
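As an illustration of "every input passable as a flag, interactive mode only as a fallback", here is a minimal sketch using Python's standard `argparse`. The tool name `deploy-demo`, the `--env` and `--yes` flags, and the messages are hypothetical and are not taken from the article.

```python
import argparse
import sys


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(prog="deploy-demo")  # hypothetical tool name
    parser.add_argument("--env", choices=["staging", "production"])
    parser.add_argument("--yes", action="store_true", help="skip the confirmation prompt")
    return parser


def resolve_env(args, interactive: bool) -> str:
    if args.env:
        return args.env
    if interactive:
        # Fallback only: a human at a terminal left the flag out.
        return input("Environment (staging/production): ").strip()
    raise SystemExit("error: --env is required when stdin is not a terminal")


def confirmed(args, interactive: bool) -> bool:
    if args.yes:
        return True
    if interactive:
        return input("Proceed? [y/N] ").strip().lower() == "y"
    raise SystemExit("error: pass --yes to confirm in non-interactive mode")


def main(argv=None, interactive=None) -> int:
    args = build_parser().parse_args(argv)
    if interactive is None:
        interactive = sys.stdin.isatty()  # agents usually run without a TTY
    env = resolve_env(args, interactive)
    if not confirmed(args, interactive):
        print("aborted")
        return 1
    print(f"deploying to {env}")
    return 0
```

An agent would call something like `main(["--env", "staging", "--yes"])` and never reach a prompt. When a flag is missing and no terminal is attached, the command fails fast with a message naming the flag instead of hanging on input.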

Anthropic shipped four ways to run Claude without you in the last three weeks. Here’s when to use each one, and how they compare to OpenClaw. /schedule is the big one. Cloud-based recurring jobs on Anthropic’s infrastructure, launched March 23. Your laptop can be closed, your terminal can be shut. You write a prompt, set a cron cadence, Claude runs it. Nightly CI reruns on flaky tests so your morning standup starts with a PR instead of a bug report. Weekly dependency audits that ship a clean PR every Monday. Daily reviews of open PRs that flag anything stale for more than 48 hours. If you’re r…

TurboQuant: Redefining AI efficiency with extreme compression

We introduce a set of advanced theoretically grounded quantization algorithms that enable massive compression for large language models and vector search engines. Vectors are the fundamental way AI models understand and process information. Small vectors describe simple attributes, such as a point in a graph, while “high-dimensional” vectors capture complex information such as the features of an image, the meaning of a word, or the properties of a dataset. High-dimensional vectors are incredibly powerful, but they also consume vast amounts of memory, leading to bottlenecks in the key-value cac…

Thoughts on slowing the fuck down

2026-03-25 The turtle's face is me looking at our industry It's been about a year since coding agents appeared on the scene that could actually build you full projects. There were precursors like Aider and early Cursor, but they were more assistant than agent. The new generation is enticing, and a lot of us have spent a lot of free time building all the projects we always wanted to build but never had time to. And I think that's fine. Spending your free time building things is super enjoyable, and most of the time you don't really have to care about code quality and maintainability. It also gi…

Meet the new Stitch, your vibe design partner. Here are 5 major upgrades to help you create, iterate and collaborate: AI-Native Canvas, Smarter Design Agent, Voice, Instant Prototypes, Design Systems and DESIGN.md. Rolling out now. Details and product walkthrough video in 1: Here is a quick walkthrough of everything new in Stitch: The AI-native canvas can hold and reason across images, code, and text simultaneously. The new agent manager helps you design in parallel. (PS … light mode!) A smarter design agent now understands your entire AI-Native Canvas We are introducing a comp…

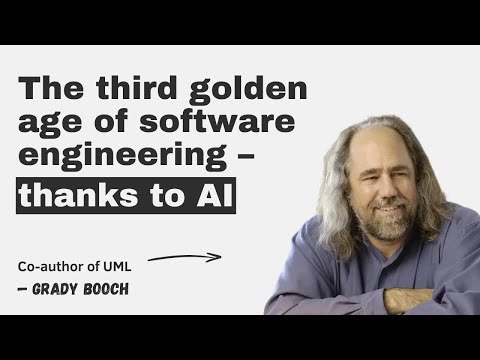

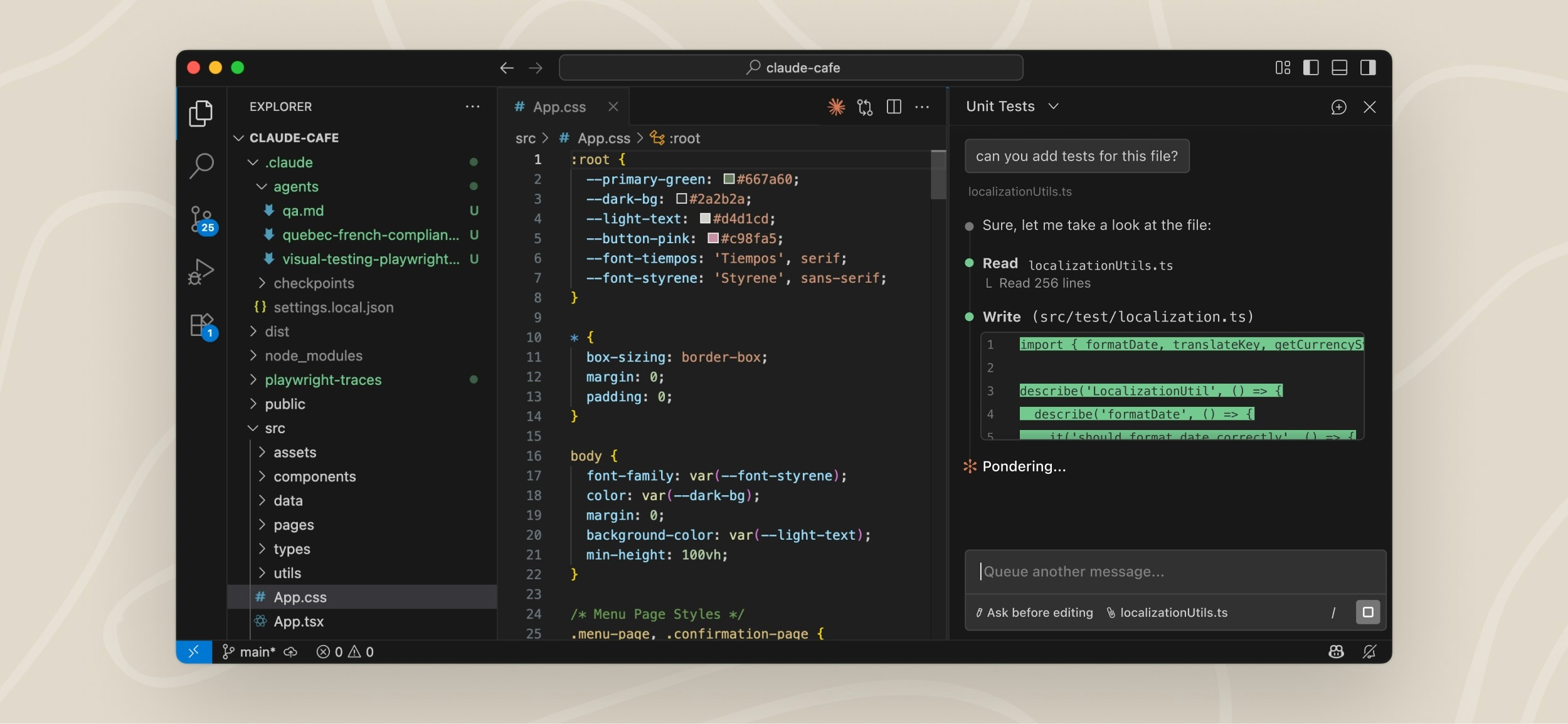

Lessons from Building Claude Code: How We Use Skills Skills have become one of the most used extension points in Claude Code. They’re flexible, easy to make, and simple to distribute. But this flexibility also makes it hard to know what works best. What type of skills are worth making? What's the secret to writing a good skill? When do you share them with others? We've been using skills in Claude Code extensively at Anthropic with hundreds of them in active use. These are the lessons we've learned about using skills to accelerate our development. What are Skills? If you’re new to skills, I’d r…

AI agent skill that researches any topic across Reddit, X, YouTube, HN, Polymarket, and the web - then synthesizes a grounded summary

We're shipping a new feature in Claude Cowork as a research preview that I'm excited about: Dispatch! One persistent conversation with Claude that runs on your computer. Message it from your phone. Come back to finished work. To try it out, download Claude Desktop, then pair your phone.

How to 10x your Claude Skills (using Karpathy's autoresearch method) Your Claude skills probably fail 30% of the time and you don't even notice. I built a method that auto-improves any skill on autopilot, and in this article I'm going to show you exactly how to run it yourself. You kick it off, and the agent tests and refines the skill over and over without you touching anything. My landing page copy skill went from passing its quality checks 56% of the time to 92%. With zero manual work at all. The agent just kept testing and tightening the prompt on its own. Here's the method and the exact s…

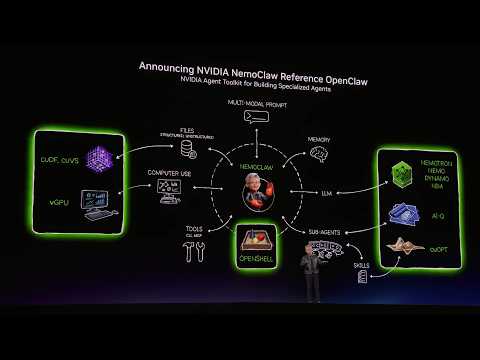

NVIDIA's Jensen Huang launches NemoClaw to the OpenClaw community

NVIDIA today announced NemoClaw, an open source stack that simplifies running OpenClaw always-on assistants—with a single command. It incorporates policy-based privacy and security guardrails, giving you control over your agents’ behavior and data handling. This enables self-evolving claws to run more safely in the cloud, on prem, on NVIDIA RTX PCs, and on NVIDIA DGX Spark.

“Every software company in the world needs to have a Claw strategy” - Jensen Huang, Nvidia. Indeed. This and more. jensen sells the shovels, builds the mine, and now writes the strategy doc. nvidia isnt competing with anyone, theyre the infrastructure. Jensen consistent on this for years. The interesting shift is Claw strategy implying orchestration, not just inference. Most software companies are still stuck at the API call stage. The ones who figure out agent-to-agent coordination first will widen the gap fast. i am the Claw strategy at one company. what kevin figured out…

The Death of RAG?

Check out Inngest and let your AI agents wear a harness now!

don't make me tap the sign. Quote dex @dexhorthy · Aug 13, 2025: Giving sonnet 4 a 1m context window is kinda unhinged considering I see many folks struggle to keep it on task past… not clear to me needle in the haystack is the right measure for long context performance. I used to be a religious /clear user, but doing much less now, imo 4.6 is quite good across long context windows. Yeah I take NIAH as like “the best it could possibly do” - for long convos with lots of instructions it will be worse than that. it wasn’t the dumb zone until I showed up. I’m always 85% context maxxi…

OpenClaw feels like this year's DeepSeek moment. Hype in China way beyond expectations! Kimi Claw rode the wave to #2 on Feb product growth rankings. :) awesome!! keep up the great work! OpenClaw as DeepSeek moment proves China strategy: when US gatekeeps access, China open-sources everything. Next frontier isnt model performance - its democratization of infrastructure. this is giving me flashbacks to when everyone suddenly became a deepseek expert overnight... same energy fr. Government subsidies + enterprise forks + open-source momentum is a powerful combo for…

TLDR: it is a cron job dispatching tickets from Linear to workers, each of which is a Ralph loop using a Linear comment as draft pad for persisted state. Yes it is all you need. Beautifully designed and minimal. GitHub - openai/symphony: Symphony turns project work into isolated, autonomous implementation... From github.com

sent this to the team today. everything great comes from being able to delay gratification for as long as possible and it feels like we're collectively losing our ability to do that

Luke The Dev @iamlukethedev: Scrum meeting added to the OpenClaw office. Agents walk into the meeting room and report their progress in real time. Task management on another level. Standup meetings with your AI engineers. Sound on

a file system is not all you need there are a couple of articles going around on structured context graphs for knowledge work and argue that markdown files are the best primitive heres one: Heinrich @arscontexta · Feb 25 Article Company Graphs = Context Repository everything is a context problem when people say AI cant do real work, what theyre actually saying is they gave it bad context @alexalbert__ said 2026 will transform knowledge work (read this after you... and the diagnosis is true: context is the bottleneck. companies are sitting on scattered knowledge: decisions, rationale, meeting o…

The Anatomy of an Agent Harness TLDR: Agent = Model + Harness. Harness engineering is how we build systems around models to turn them into work engines. The model contains the intelligence and the harness makes that intelligence useful. We define what a harness is and derive the core components today's and tomorrow's agents need. Can Someone Please Define a "Harness"? Agent = Model + Harness If you're not the model, you're the harness. A harness is every piece of code, configuration, and execution logic that isn't the model itself. A raw model is not an agent. But it becomes one when a harness…

Towards self-driving codebases · Cursor

We're excited by the reaction to our research on scaling long-running autonomous coding. This work started as internal research to push the limits of the current models. As part of the research, we created a new agent harness to orchestrate many thousands of agents and observe their behavior. By last month, our system was stable enough to run continuously for one week, making the vast majority of the commits to our research project (a web browser). This browser was not intended to be used externally and we expected the code to have imperfections. However, even with quirks, the fact that thous…

From craft to mass production: Software as an industrial system · Ona

For a long time, writing software felt like a creative act, much like composing music or shaping clay. That feeling was real. But software development is no longer the sum of those moments. It is a production system in which creativity occupies only a small fraction of total lead time. For most businesses, software development is not defined by the act of writing code. It is a multi-stage production system that spans planning, coordination, execution, verification, integration, and release. Code is one station on a factory floor. An important one, but no longer the bottleneck. The craft myth b…

1,500+ PRs Later: Spotify’s Journey with Our Background Coding Agent (Honk, Part 1) | Spotify Engineering

This is part 1 in our series about Spotify's journey with background coding agents (internal codename: “Honk”) and the future of large-scale software maintenance. See also part 2 and part 3 . For years, developer productivity has improved through better tooling. We have smarter IDEs, faster builds, better tests, and more reliable deployments. But even so, maintaining a codebase, keeping dependencies up to date, and ensuring that the code follows best practices demands a surprising amount of manual work. At Spotify, our Fleet Management system automated much of that toil, yet any moderately com…

An agentic skills framework & software development methodology that works.

Custom Harness: The Agent Harness Is Model-Shaped The same scaffold that doubles one model's performance actively hurts another. @cursor_ai proved it. They remove reasoning traces from GPT-5-Codex and performance drops 30%. They remove them from base GPT-5 and it drops 3%. Same harness, same benchmark and 10x difference in sensitivity. They tell Codex to "preserve tokens" and the model starts refusing tasks. They give Claude the exact same instruction and nothing changes. Princeton's HAL leaderboard tested 21,730 agent rollouts across 9 models and found the optimal scaffold flips depending on…

Satya Nadella @satyanadella: Announcing Copilot Cowork, a new way to complete tasks and get work done in M365. When you hand off a task to Cowork, it turns your request into a plan and executes it across your apps and files, grounded in your work data and operating within M365’s security and governance… Pay attention to this one if you are building terminal-based coding agents. OpenDev is an 81-page paper covering scaffolding, harness design, context engineering, and hard-won lessons from building CLI coding agents. It introduces…

AI Writing Pattern Directory

Man I am so sick of AI slop in writing. I don't think you quite understand how prevalent it is. It is disrespectful to expect ME to read something YOU could not even be bothered to write (or likely even read). The lingering human connection that remained on the internet is now being diluted even further. Many of the Hacker News posts I click on (especially sorting by new) are completely AI generated (let me not even start on Reddit posts or Twitter threads (which I don't use)). This includes several that reach the front page on a daily basis. It's shameless. Unfortunately, many of you educated…

No, it doesn't cost Anthropic $5k per Claude Code user

My LinkedIn and Twitter feeds are full of screenshots from the recent Forbes article on Cursor claiming that Anthropic's $200/month Claude Code Max plan can consume $5,000 in compute. The relevant quote: Today, that subsidization appears to be even more aggressive, with that $200 plan able to consume about $5,000 in compute, according to a different person who has seen analyses on the company's compute spend patterns. This is being shared as proof that Anthropic is haemorrhaging money on inference. It doesn't survive basic scrutiny. I'm fairly confident the Forbes sources are confusing retail…

On January 5, employees at Cursor returned from the holiday weekend to an all-hands meeting with a slide deck titled “War Time.” After becoming the hottest, fastest growing AI coding company, Cursor is confronting a new reality: developers may no longer need a code editor at all. Check out the full story: https://forbes.com/sites/annatong/2026/03/05/cursor-goes-to-war-for-ai-coding-dominance/?utm_campaign=ForbesMainTwitter&utm_source=ForbesMainTwitter&utm_medium=social … (Photo: Kimberly White via Getty Images for Fortune Media) unpopular take but IDE-based AI tools were alw…

signüll @signulll: remarkable to see github copilot execution given they had almost all of the advantages including first mover. what happened?! They screwed over the guy who spearheaded the project on comp and he walked. This happened fairly early and it never recovered. That’s my recollection at least based on his posts. Honestly feel so bad for people who are only allowed to use copilot at work. Every time I hear somebody be like, "Oh yeah, AI is actually not that good. I tried it out." Every fucking time, it's always co-pilot. This chart was already deb…

When Using AI Leads to “Brain Fry”

On New Year’s Day, programmer Steve Yegge launched Gas Town, an open-source platform that lets users orchestrate swarms of Claude Code agents simultaneously, assembling software at blistering speed. The results were impressive, but also dizzying. “[T]here’s really too much going on for you to reasonably comprehend,” wrote one early user. “I had a palpable sense of stress watching it. Gas Town was moving too fast for me.” Gas Town illustrates a growing tension: AI promises to act as an amplifier that will drive efficiency and make work easier, but workers that are using these AI tools report t…

Cursor Goes To War For AI Coding Dominance


International models on ARC-AGI-2 Semi Private - Kimi K2.5 ( @Kimi_Moonshot ): 12%, $0.28 - Minimax M2.5 ( @MiniMax_AI ): 5%, $0.17 - GLM-5 ( @Zai_org ): 5%, $0.27 - Deepseek V3.2 ( @deepseek_ai ): 4%, $0.12 These models score below July 2025 frontier labs. We only conduct Semi-Private testing with providers that have trusted data retention agreements. Qwen 3 Max Thinking is not included for this reason. I see the same thing on pencil puzzle bench (multi step reasoning benchmark), US closed models score well and above the open chinese models. interesting that Mistral is com…

You Need to Rewrite Your CLI for AI Agents

Human DX optimizes for discoverability and forgiveness. Agent DX optimizes for predictability and defense-in-depth. These are different enough that retrofitting a human-first CLI for agents is a losing bet. I built a CLI for Google Workspace — agents first. Not “built a CLI, then noticed agents were using it.” From Day One, the design assumptions were shaped by the fact that AI agents would be the primary consumers of every command, every flag, and every byte of output. CLIs are increasingly the lowest-friction interface for AI agents to reach external systems. Agents don’t need GUIs. They nee…

AI Isn't Replacing SREs. It's Deskilling Them.

💌 Hey there, it’s Elizabeth from SigNoz! This newsletter is an honest attempt to talk about all things observability, OpenTelemetry, open-source and the engineering in between! This piece took 6 days, 5 hours to be cooked, hope we served. 🌚 There are two popular prophecies floating around tech circles these days. The first says SRE is the future of all software engineering, that as AI writes more and more code, the humans who remain will be the ones keeping systems alive. The second says AI will devour every tech job alive, SREs included. Neither is particularly useful if you’re an SRE…

Building Your Own Coding Agent — Latent Patterns

Pre-completed project A complete reference implementation of the coding agent in Python, Go, Ruby, Java, Rust, .NET, and Node.

Agent Harness — Glossary — Latent Patterns

The orchestration layer around a language model that manages prompts, tool execution, policy checks, and loop control for autonomous agent behavior. An agent harness is the orchestration layer around an agent: the runtime that constructs context, executes tool calls, enforces guardrails, and decides when each loop iteration should continue or stop…

definition: Agent Harness > The orchestration layer around a language model that manages prompts, tool execution, policy checks, and loop control for autonomous agent behavior. An agent harness is the orchestration layer around an agent: the runtime that constructs context, executes tool calls, enforces guardrails, and decides when each loop iteration should continue or stop. If the model is the “reasoning engine,” the harness is the operating system and control plane that makes the engine useful, safe, and repeatable in production. Agent Harness — Glossary — Latent Patterns From latentpattern…
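The four responsibilities named in this definition (context construction, tool execution, guardrails, loop control) can be sketched as a short loop. Everything below is hypothetical: `Harness`, `ModelReply`, `scripted_model` and the `add` tool are stand-ins made up for illustration, and the "model" is a scripted function rather than a real LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class ModelReply:
    """What the (hypothetical) model returns each turn."""
    text: str
    tool: Optional[str] = None   # tool to call, or None when the model is finished
    argument: str = ""


@dataclass
class Harness:
    model: Callable[[List[str]], ModelReply]
    tools: Dict[str, Callable[[str], str]]
    allowed_tools: set
    max_steps: int = 5
    history: List[str] = field(default_factory=list)

    def build_context(self, task: str) -> List[str]:
        # Context construction: the task plus only the most recent observations.
        return [f"task: {task}"] + self.history[-3:]

    def run(self, task: str) -> str:
        for _ in range(self.max_steps):                 # loop control: hard step budget
            reply = self.model(self.build_context(task))
            if reply.tool is None:                      # model signals completion
                return reply.text
            if reply.tool not in self.allowed_tools:    # guardrail: policy check
                self.history.append(f"denied: {reply.tool}")
                continue
            result = self.tools[reply.tool](reply.argument)   # tool execution
            self.history.append(f"{reply.tool}({reply.argument}) -> {result}")
        return "stopped: step budget exhausted"


def scripted_model(context: List[str]) -> ModelReply:
    """Stand-in for an LLM: requests one tool call, then answers from its result."""
    observations = [line for line in context if "->" in line]
    if not observations:
        return ModelReply(text="", tool="add", argument="2 3")
    return ModelReply(text=f"answer: {observations[-1].split('-> ')[1]}")


def add(argument: str) -> str:
    return str(sum(int(part) for part in argument.split()))


harness = Harness(model=scripted_model, tools={"add": add}, allowed_tools={"add"})
result = harness.run("add two and three")
print(result)
```

Swapping `scripted_model` for a real model call leaves the loop unchanged, which is the sense in which the harness, not the model, decides what reaches the model and when to stop.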

The paper says the best way to manage AI context is to treat everything like a file system. Today, a model's knowledge sits in separate prompts, databases, tools, and logs, so context engineering pulls this into a coherent system. The paper proposes an agentic file system where every memory, tool, external source, and human note appears as a file in a shared space. A persistent context repository separates raw history, long term memory, and short lived scratchpads, so the model's prompt holds only the slice needed right now. Every access and transformation is logged with timestamps and provena…

Zen of AI Coding

Dedicated to all those who are sceptical about the significance of agentic coding, and to those who are not, and are wondering what it means for the future of their profession. The title is an homage to Zen of Python by Tim Peters. Unlike Tim, I am not a zen master. My only aim is to take stock of where we are and where we might be heading. I have been building with coding agents daily for the past year, and I also help teams adopt them without losing reliability or security. Software development is dead Code is cheap Refactoring easy So is repaying technical debt All bugs are shallow Create t…

Kimi K2.5 & The 3 New LLM Frontier

Check out HubSpot's FREE AI App Builder Kit:

mlx-community/Qwen3.5-35B-A3B-4bit · Hugging Face


Agent Harness is the Real Product Everyone talks about models. Nobody talks about the scaffolding. The companies shipping the best AI agents today- Claude Code, Cursor, Manus, Devin, SWE-Agent all converge on the same architecture: a deliberately simple loop wraps the model, a handful of primitive tools give it hands, and the scaffolding decides what information reaches the model and when. The model is interchangeable. The harness is the product. Here is the evidence: Claude Opus 4.5 scores 42% on CORE-Bench with one scaffold and 78% with another. Cursor's lazy tool loading cuts token usage by…

OpenCode with MLX

unsloth/Qwen3.5-27B at main


The left is missing out on AI

Credit: Transformer/ Rebecca Hendin “Somehow all of the interesting energy for discussions about the long-range future of humanity is concentrated on the right,” wrote Joshua Achiam, head of mission alignment at OpenAI, on X last year. “The left has completely abdicated their role in this discussion. A decade from now this will be understood on the left to have been a generational mistake.” It’s a provocative claim: that while many sectors of the world, from politics to business to labor, have begun engaging with what artificial intelligence might soon mean for humanity, the left has not. And…

The self-driving codebase: fleets, swarms and background agents Recently an article titled 'something big is happening' went viral. It was a wake-up call to those not in the tech industry about how AI has hit this inflection point, since December 2025. It does a great job of putting into words what those of us keeping up with the frontier of coding AI feel. An inflection point, and like things are 'going exponential'. My contributions on areyougoingexponential.rhys.dev/loujaybee I feel it and see it in my own GitHub contributions graph. The bottleneck of software development has shifted violen…

The Self-Driving Codebase

DAIR.AI @dair_ai: New research on agent memory. Agent memory is evaluated on chatbot-style dialogues. But real agents don't chat. They interact with databases, code executors, and web interfaces, generating machine-readable trajectories, not conversational text. The key to better memory is to preserve causal dependencies. Existing memory benchmarks don't actually measure what matters for agentic applications. This new research introduces AMA-Bench, the first benchmark built for evaluating long-horizon memory in real agentic tasks. It spans six domains including web, text-to-SQL…

A simple framework to build Agentic Systems that just works. I've been building agentic systems for a couple of years now. For YouTube, for Open Source, for my SaaS, for my office. Today I want to write this short article sharing what I have learned and where my policies have converged. Many people claim that building agentic harnesses is more of an art than a science. I mostly agree with this, but I still think it is a bit dangerous to assume "it's just art". The art mindset sets you up to think about agentic systems in the wrong way. If you convince yourself that all you are building is an art…

The third era of AI software development When we started building Cursor a few years ago, most code was written one keystroke at a time. Tab autocomplete changed that and opened the first era of AI-assisted coding. Then agents arrived, and developers shifted to directing agents through synchronous prompt-and-response loops. That was the second era. Now a third era is arriving. It is defined by agents that can tackle larger tasks independently, over longer timescales, with less human direction. As a result, Cursor is no longer primarily about writing code. It is about helping developers build t…

Not just did OpenAI defect and concede to this whole authoritarian maneuver, but Sam also went and just deceptively framed the whole thing to try to make it look like they had agreed to the same Anthropic redlines, which is not actually true. Quote Nathan Calvin @_NathanCalvin · Feb 28 From reading this and Sam's tweet, it really seems like OpenAI *did* agree to the compromise that Anthropic rejected - "all lawful use" but with additional explanation of what the DOW means by all lawful use. The concerns Dario raised in his response would still apply here x.com/UnderSecretary…

Introducing Desloppify v.0.8. Thanks to many workflow improvements + new agent planning tools, it can now run for days on end - autonomously finding, understanding, & fixing large and small code quality problems. There's no reason your slop code can't be beautiful!

Latent.Space @latentspacepod: From rewriting Google’s search stack in the early 2000s to reviving sparse trillion-parameter models and co-designing TPUs with frontier ML research, Jeff Dean has quietly shaped nearly every layer of the modern AI stack. As Chief AI Scientist at Google and a driving force behind Gemini, Jeff has lived through multiple scaling revolutions from CPUs and sharded indices to multimodal models that reason across text, video, and code. We sat down with Jeff to unpack what it really means to “own the Pareto frontier,” why distillation is the q…

Joy & Curiosity #76

This week I found myself writing code by hand again. Not a lot, maybe ten, twenty lines in total, which is far less than what I had Amp produce, but still: actual typing out of code. Miracle I didn’t get any blisters. At our Amp meetup in Singapore I mentioned this on stage and someone in the audience cheekily asked: “You just told us that these agents can now work well when you give them a longer leash and yet you wrote code by hand, how come?” The answer can probably be boiled down to something that sounds very trite: to build software means to learn. When you build a new piece of software,…

OrbitCLI — Your terminal. Never closes.

No Servers Yet. Add a server to connect to remote machines via SSH

Sal DiStefano reposted Lance Martin @RLanceMartin Give Claude a computer TL;DR – Programmatic tool calling (PTC) is an interesting capability in Claude Opus/Sonnet 4.6. Instead of making tool calls that each round-trip through Claude's context, Claude writes code that can orchestrate tool calls directly inside a container. Intermediate tool results return to the code, not Claude’s context window. This reduces token usage and improves performance on multi-step tasks like search. Opus 4.6 with PTC recently scored #1 on LMArena’s search benchmark. See our docs to learn…
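To make the idea in the excerpt above concrete, here is a minimal sketch of the pattern it describes: a script calls tools in a loop, keeps the bulky intermediate results local, and hands back only a short summary. This is not the Claude API. The `search` tool, the `orchestrate` function, and the character counts are all hypothetical stand-ins chosen for illustration.

```python
# Hypothetical stand-in for a tool exposed inside the container.
def search(query: str) -> list[str]:
    """Fake search tool that returns a bulky list of result strings."""
    return [f"{query} result {i}: " + "lorem ipsum " * 20 for i in range(5)]


def orchestrate(queries: list[str]) -> dict:
    """Stand-in for model-written code: calls tools directly, keeps results local."""
    intermediate_chars = 0
    hits = {}
    for q in queries:
        results = search(q)  # the result stays in this process
        intermediate_chars += sum(len(r) for r in results)
        hits[q] = len(results)
    # Only this short summary would be returned to the model's context.
    summary = "; ".join(f"{q}: {n} hits" for q, n in hits.items())
    return {"summary": summary, "intermediate_chars": intermediate_chars}


out = orchestrate(["ptc", "tool calling"])
print(out["summary"])
print(len(out["summary"]), "chars returned vs", out["intermediate_chars"], "chars kept local")
```

The gap between the two printed numbers is a rough proxy for the tokens that no longer pass through the context window in this toy setup.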

Stop Using /init for AGENTS.md

TL;DR: A good mental model is to treat AGENTS.md as a living list of codebase smells you haven’t fixed yet, not a permanent configuration. Auto-generated AGENTS.md files hurt agent performance and inflate costs by 20%+ because they duplicate what agents can already discover. Human-written files help only when they contain non-discoverable information - tooling gotchas, non-obvious conventions, landmines. Every other line is noise. There’s a ritual that’s become almost universal among developers adopting AI coding agents. You set up a new repo, run /init, watch the agent scan your codebase, an…

THESE ARE ALL ONE-SHOT SVGs!!! From a new anonymous model called "Arrow Preview" on Design Arena. This level of detail is unheard of from an LLM. It's using a different technique to create these than all previous LLMs. SVG benchmark is saturated. Check comments

we're making @blocks smaller today. here's my note to the company. #### today we're making one of the hardest decisions in the history of our company: we're reducing our organization by nearly half, from over 10,000 people to just under 6,000. that means over 4,000 of you are being asked to leave or entering into consultation. i'll be straight about what's happening, why, and what it means for everyone. first off, if you're one of the people affected, you'll receive your salary for 20 weeks + 1 week per year of tenure, equity vested through the end of may, 6 months of health care, your corpora…

pedram.md reposted Thariq @trq212 Lessons from Building Claude Code: Seeing like an Agent One of the hardest parts of building an agent harness is constructing its action space. Claude acts through Tool Calling, but there are a number of ways tools can be constructed in the Claude API with primitives like bash, skills and recently code execution (read more about programmatic tool calling on the Claude API in @RLanceMartin's new article). Given all these options, how do you design the tools of your agent? Do you need just one tool like code execution or bash? What if you had 50…

Séb Krier reposted Sakana AI @SakanaAILabs We’re excited to introduce Doc-to-LoRA and Text-to-LoRA, two related research projects exploring how to make LLM customization faster and more accessible. https://pub.sakana.ai/doc-to-lora/ By training a Hypernetwork to generate LoRA adapters on the fly, these methods allow models to instantly internalize new information or adapt to new tasks. Biological systems naturally rely on two key cognitive abilities: durable long-term memory to store facts, and rapid adaptation to handle new tasks given limited sensory cues. While modern LLMs…
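As a rough illustration of what "a hypernetwork that generates LoRA adapters" means, the sketch below maps an embedding vector to two low-rank matrices `A` and `B` and adds their product to a frozen weight matrix. It is a toy under loud assumptions: the hypernetwork is a single untrained random linear map, the dimensions are arbitrary, and none of the names (`make_hypernetwork`, `apply_lora`) come from Sakana AI's work.

```python
import random


def matmul(a, b):
    """Plain-Python matrix product of two list-of-lists matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def make_hypernetwork(embed_dim, d_out, d_in, rank, seed=0):
    """Hypothetical hypernetwork: one random (untrained) linear layer."""
    rng = random.Random(seed)
    n_params = rank * d_in + d_out * rank
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(embed_dim)] for _ in range(n_params)]

    def generate(embedding):
        # Map the embedding to a flat parameter vector, then reshape into A and B.
        flat = [sum(w * e for w, e in zip(row, embedding)) for row in weights]
        a_flat, b_flat = flat[: rank * d_in], flat[rank * d_in:]
        A = [a_flat[i * d_in:(i + 1) * d_in] for i in range(rank)]   # rank x d_in
        B = [b_flat[i * rank:(i + 1) * rank] for i in range(d_out)]  # d_out x rank
        return A, B

    return generate


def apply_lora(W, A, B, scale=1.0):
    """Return W + scale * (B @ A); the base weights W are left untouched."""
    delta = matmul(B, A)
    return [[w + scale * d for w, d in zip(w_row, d_row)] for w_row, d_row in zip(W, delta)]


d_out, d_in, rank, embed_dim = 4, 3, 2, 5
W = [[0.0] * d_in for _ in range(d_out)]            # frozen base weights (toy)
generate = make_hypernetwork(embed_dim, d_out, d_in, rank)
A, B = generate([1.0, 0.0, 0.5, -0.5, 0.2])         # made-up document embedding
W_adapted = apply_lora(W, A, B)
```

The point of the shape is that a new adapter costs one forward pass through `generate` instead of a fine-tuning run; in a real system the hypernetwork would be trained, which this sketch does not attempt.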

SkillsJars

Creating algorithmic art using p5.js with seeded randomness and interactive parameter exploration. Use this when users request creating art using code, generative art, algorithmic art, flow fields, or particle systems. Create original algorithmic art rather than copying existing artists' work to avoid copyright violations. Versions: 2026_02_25-3d59511, 2026_02_06-1ed29a0. `runtimeOnly("com.skillsjars:anthropics__skills__algorithmic-art:2026_02_25-3d59511")` Applies Anthropic's official brand colors and typography to any sort of artifact that may benefit from having Anthropic's look-and-feel. Use it when bran…

If you're not writing your agent skills as statecharts, what are you even doing?

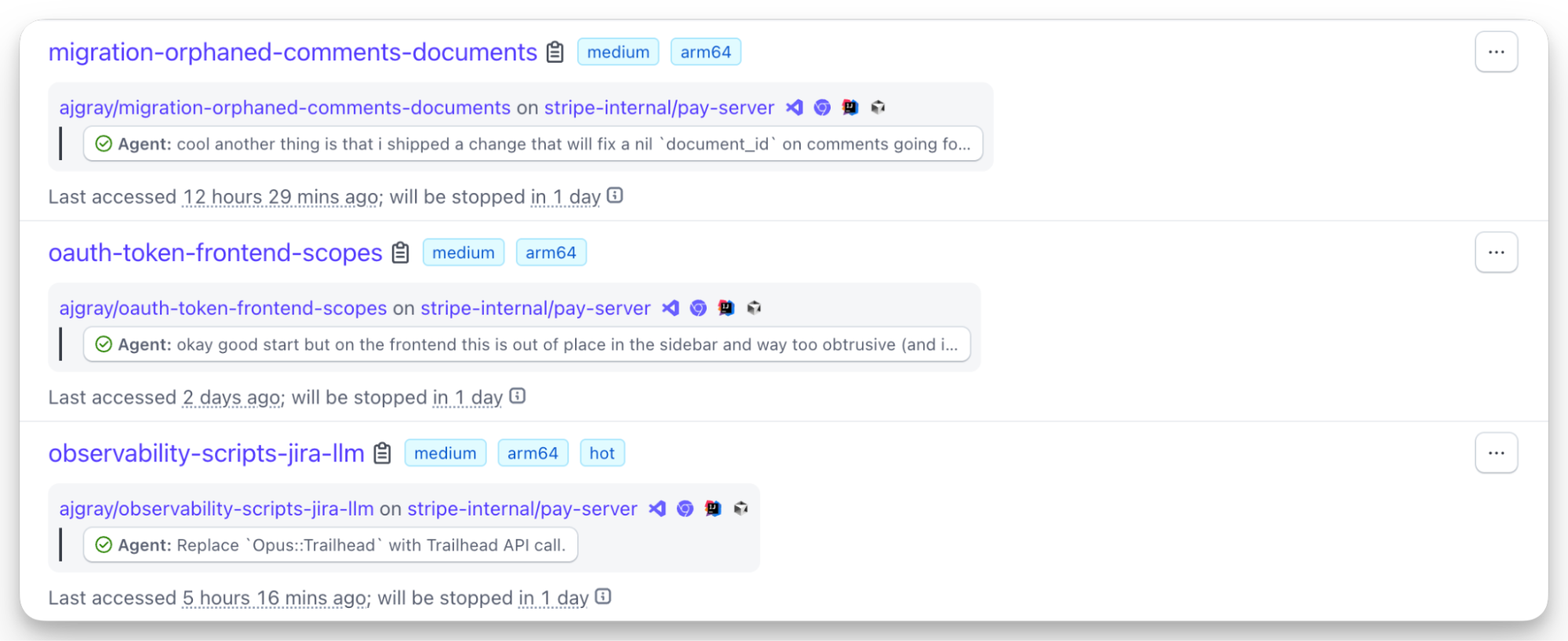

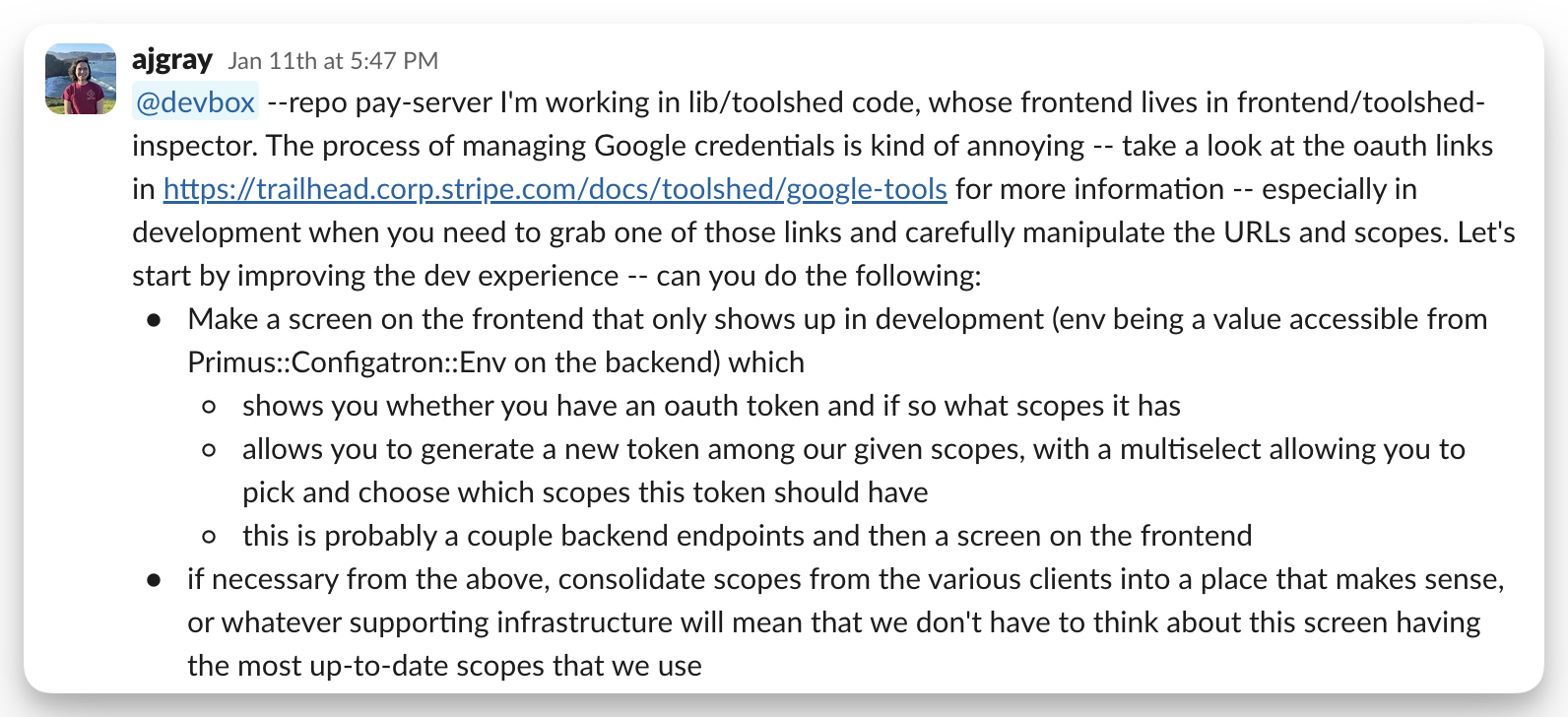

Minions: Stripe’s one-shot, end-to-end coding agents—Part 2

As a recap of Part 1 in this blog miniseries, minions are a homegrown unattended agentic coding flow at Stripe. Over 1,300 Stripe pull requests (up from 1,000 as of Part 1) merged each week are completely minion-produced, human-reviewed, but containing no human-written code. If you haven’t read Part 1, we recommend checking that out first to understand the developer experience of using minions. In this post, we’ll dive deeper into some more details of how they’re built, focusing on the Stripe-specific portions of the minion flow. Devboxes, hot and ready For maximum effectiveness, unattended ag…

Minions: Stripe’s one-shot, end-to-end coding agents

Across the industry, agentic coding has gone from new and exciting to table stakes, and as underlying models continue to improve, unattended coding agents have gone from possibility to reality. Minions are Stripe’s homegrown coding agents. They’re fully unattended and built to one-shot tasks. Over a thousand pull requests merged each week at Stripe are completely minion-produced, and while they’re human-reviewed, they contain no human-written code. Our developers can still plan and collaborate with agents such as Claude and Cursor, but in a world where one of our most constrained resources is…

Ivan Fioravanti ᯅ @ivanfioravanti Qwen 3.5 Medium models benchmarks on M3 Ultra Alibaba Qwen released Qwen 3.5 Medium Model Series and on paper is powerful, faster and smaller than Qwen 3 Series. In this article we are gonna see: Qwen/Qwen3.5-122B-A10B vs Qwen/Qwen3.5-35B-A3B vs Qwen/Qwen3.5-27B in 4bit from pure speed and memory perspective. Quality Benchmarks are already available everywhere. We'll start with pure benchmarks and close with a sample of OpenCode running with Qwen3.5-122B-A10B 4bit to generate a snake game, with final results and prompt at the…

It’s Next.js Liberation Day. The #1 request we kept hearing: help us run Next fast and secure, without the lock-in and the costs. So we did it. We kept the amazing DX of @nextjs, without the bespoke tooling, built on @vite. We’re working with other providers to make deployment a first-class experience everywhere. Next.js belongs to everyone. How we rebuilt Next.js with AI in one week (from blog.cloudflare.com)

Over the last ~2 weeks I've rewritten the @ladybirdbrowser JavaScript compiler in Rust using AI agents. ~25k lines of safe Rust (20k if you exclude comments). No regressions on test262 or our own internal test suites. Extensively tested against the live web by browsing in lockstep mode where we run both the C++ and Rust pipelines, and then verify identical AST & bytecode. We're making a pragmatic decision and adopting Rust as a C++ successor language. What a time to be alive! Quote Ladybird @ladybirdbrowser · Feb 23 Ladybird adopts Rust, with help from AI https:// ladybird.org/posts/adopting -…

Agent skills for Obsidian. Teach your agent to use Markdown, Bases, JSON Canvas, and use the CLI.

skills/skills/zen-of-james/SKILL.md

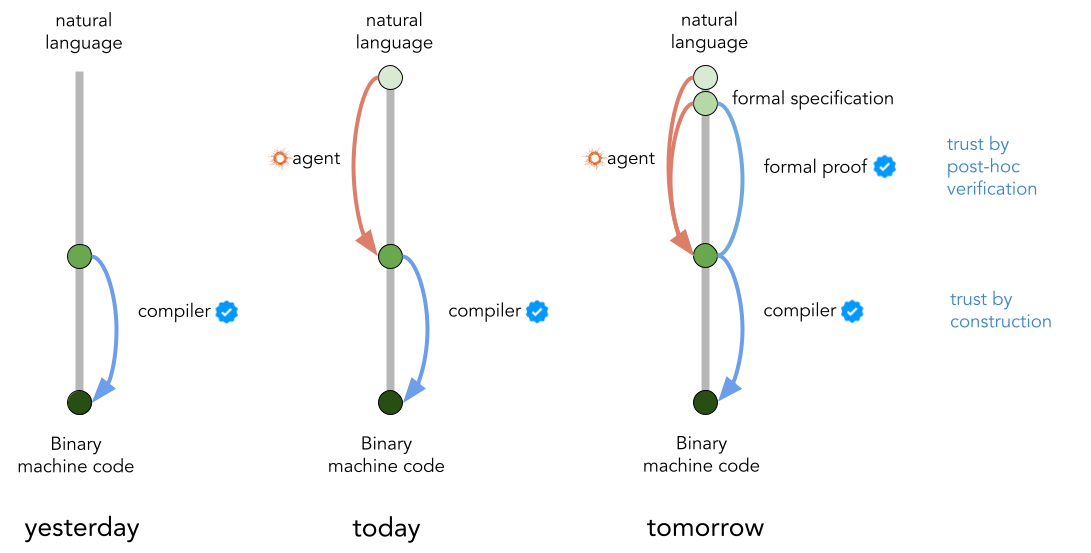

The Future of Software

February 25, 2026 The world of software is undergoing a shift not seen since the advent of compilers in the 1970s. Compilers were the original vibe coding: they automatically generate complex machine code that human programmers had to manually write before. Over time, compilers became fully trusted, nobody has to look under the hood, most programmers won't understand a thing. Are AI coding agents the new compilers? Will we simply trust whatever code they generate? In this post I focus on two questions: In what language(s) are we going to express our intent? How will humans tell AI agents what…

Nico Bailon reposted Dillon Mulroy @dillon_mulroy · Feb 19 pi code gen is all you need total bash victory confirmed again The problem is that the tool call is no longer deterministic. And really the solution is just writing better tools instead of letting Claude write bespoke python code thousands or millions of times a day. Last week I had an agent loop burning 40k+ tokens just round-tripping tool results through the model. PTC skipping those intermediate inference passes is the obvious fix... surprised it took this long to ship. This is convergence toward code-as…

A web browser for AI agents & applications

Python functions powered by AI agents - with runtime post-conditions for reliable agentic workflows.
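A runtime post-condition in this sense can be sketched as a decorator that checks the returned value and retries when a check fails. The sketch below is an assumption about the general pattern, not the linked project's API: `ensure`, `PostConditionError`, and the stubbed `ask_agent` are hypothetical names, and the "agent" is a canned iterator rather than a model call.

```python
import functools
from typing import Any, Callable


class PostConditionError(Exception):
    """Raised when no attempt satisfies every post-condition."""


def ensure(*checks: Callable[[Any], bool], retries: int = 2):
    """Hypothetical decorator: re-run the function until all checks pass."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            last = None
            for _ in range(retries + 1):
                last = fn(*args, **kwargs)
                if all(check(last) for check in checks):
                    return last
            raise PostConditionError(
                f"{fn.__name__} failed post-conditions after {retries + 1} attempts: {last!r}"
            )
        return wrapper
    return decorator


# Stub standing in for an agent call: the first answer is empty, the second is usable.
_answers = iter(["", "42"])


@ensure(lambda r: isinstance(r, str), lambda r: r.strip() != "")
def ask_agent(prompt: str) -> str:
    return next(_answers)


result = ask_agent("What is 6 * 7?")
print(result)
```

Checking the output at the call boundary means the rest of the program can treat the function like any other typed Python function, at the cost of extra calls when a check fails.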

How we rebuilt Next.js with AI in one week

*This post was updated at 12:35 pm PT to fix a typo in the build time benchmarks. Last week, one engineer and an AI model rebuilt the most popular front-end framework from scratch. The result, vinext (pronounced "vee-next"), is a drop-in replacement for Next.js, built on Vite, that deploys to Cloudflare Workers with a single command. In early benchmarks, it builds production apps up to 4x faster and produces client bundles up to 57% smaller. And we already have customers running it in production. The whole thing cost about $1,100 in tokens. Next.js is the most popular React framework. Million…

The File System Is the New Database: How I Built a Personal OS for AI Agents Every AI conversation starts the same way. You explain who you are. You explain what you're working on. You paste in your style guide. You re-describe your goals. You give the same context you gave yesterday, and the day before, and the day before that. Then, 40 minutes in, the model forgets your voice and starts writing like a press release. I got tired of this. So I built a system to fix it. I call it Personal Brain OS. It's a file-based personal operating system that lives inside a Git repository. Clone it, open it…

Hundreds of models & providers. One command to find what runs on your hardware.

Skill Graphs > SKILL.md people underestimate the power of structured knowledge. it enables entirely new kinds of applications right now people write skills that capture one aspect of something. a skill for summarizing, a skill for code review and so on. (often) one file with one capability thats fine for simple tasks but real depth requires something else imagine a therapy skill that provides relevant information about cognitive behavioral patterns, attachment theory, active listening techniques, emotional regulation frameworks and so on a single skill file cant hold that skill graphs a skill…

The Human Demotion

(All images: Gemini) After millennia of supremacy, we await our demotion. You can detect the trembling. It’s found in the anxious insistence that artificial intelligence isn’t truly intelligent. Or that using AI is a cheat, a perversity, a turf violation. The trembling intensifies with a disturbing thought: What if those flares behind your eyes—the bursts of wit and the worry, the storyboards of memory, so many yearnings—what if everything was just computation? Because our “computers” are yesterday’s model, no updates available. “I think about it practically all the time, every single day.…

The Coding Agent Is Dead

The current generation of coding agents is dead. The heart is still beating, yes, but the bullet has already left the chamber. This generation isn't the future. With the newest models, the agent — the prompts and tools you wrap around a model — is no longer the limiting factor. These models can be powerful with nearly any tool you throw at them. A simple tool called bash is often enough. Whether you show LSP diagnostics here or there is dwarfed by what these models can do through sheer brute force. As long as it mostly gets out of the way, nearly any agent can get good results out of them. Th…

Agentic Workflows

Claude Code's Experimental Memory System

Today I was reading about the Anthropic SDK memory tool and immediately wondered whether I could replicate something similar as a custom Claude Code skill. But before going down that road I wanted to check whether Anthropic was already building something native, so I tasked Claude Code to research its own minified CLI bundle. Turns out they are already building it. Note: I asked the agent to verify the discoveries a few times but haven't verified them myself manually so some information might be inaccurate or go out of date quickly. Enable it Add to ~/.claude/settings.json : { "autoMemoryEnabl…

A collection of agent skills for structured, GitHub-centric development.

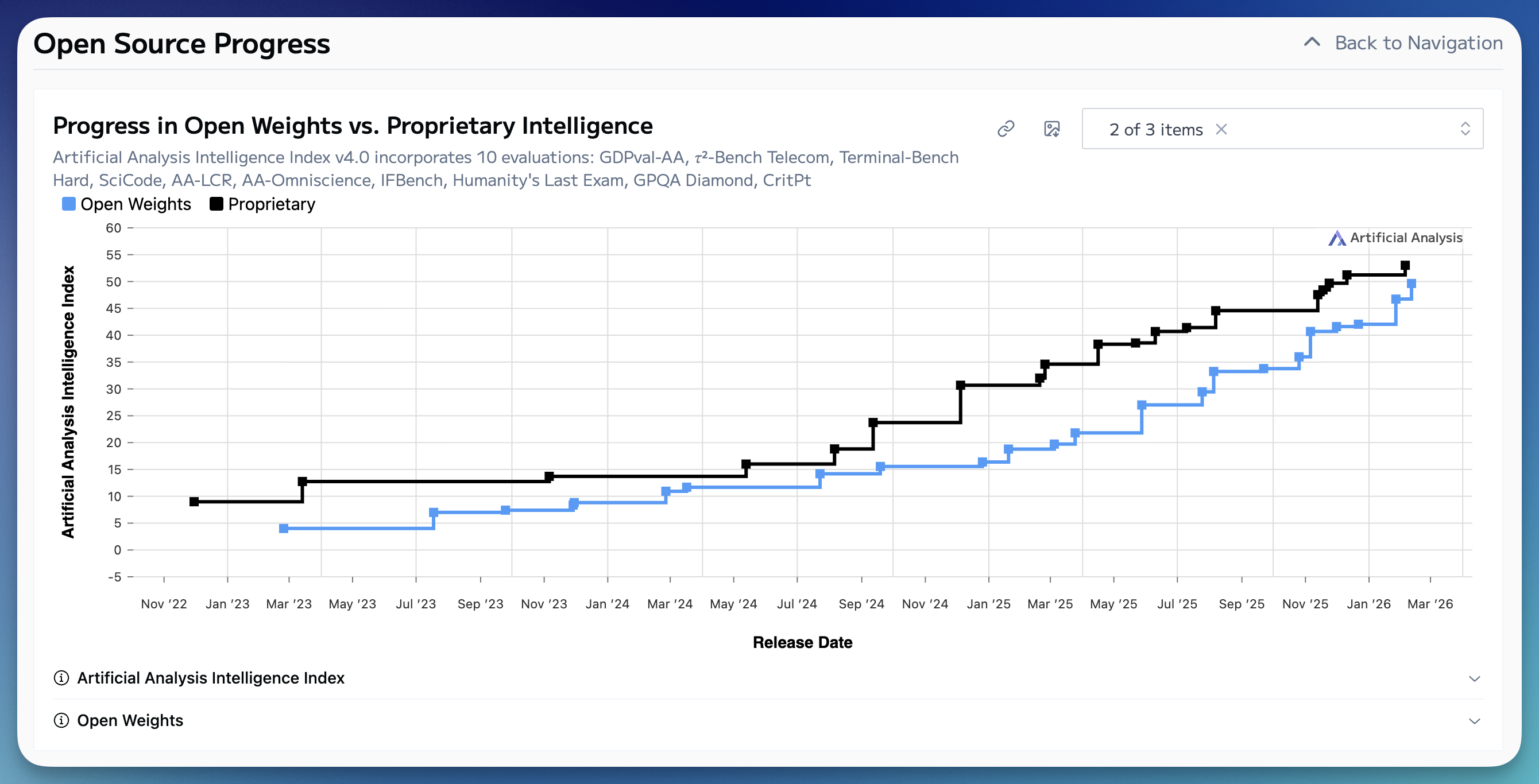

Open models in perpetual catch-up

Every 4-6 months a new open-weights model comes out that causes a clamor of discussion on how open models are closer than they ever have been to the best closed, frontier models. The most recent is Z.ai’s GLM 5 model, which is the latest, leading open weights model from a Chinese company. In the last 12 months the new part of this story is that all of the open models of discussion are coming from China, where previously they were almost always Meta’s Llamas. These moments of discussion are always reflective for me — for, despite being one of open models’ biggest advocates, I always find the na…

The open-source AI voice studio. Clone, dictate, create.

Code Factory: How to setup your repo so your agent can auto write and review 100% of your code

The goal

You want one loop:

- The coding agent writes code
- The repo enforces risk-aware checks before merge
- A code review agent validates the PR
- Evidence (tests + browser + review) is machine-verifiable
- Findings turn into repeatable harness cases

The specific review agent can be @greptile, @coderabbitai, CodeQL + policy logic, custom LLM review, or another service. The control-plane pattern stays the same. I took inspiration from this helpful blog post by @_lopopolo

Ryan Carson @ryancarson · Feb 14 I…
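One way to picture the "risk-aware checks before merge" step is a small gate that maps changed paths to a risk tier and then requires a matching set of evidence. Everything in this sketch is hypothetical: the path patterns, the tier names, and the evidence labels are illustrative assumptions, not the policy from the original post.

```python
from fnmatch import fnmatchcase

# Hypothetical policy: first matching pattern decides a path's tier.
RISK_RULES = [
    ("db/migrations/*", "high"),
    ("src/payments/*", "high"),
    ("docs/*", "low"),
]
# Hypothetical evidence required per tier (tests + browser + review, as in the loop above).
REQUIRED_EVIDENCE = {
    "low": {"tests"},
    "medium": {"tests", "review"},
    "high": {"tests", "browser", "review"},
}
TIER_ORDER = {"low": 0, "medium": 1, "high": 2}


def risk_tier(changed_paths):
    """Return the highest tier among the changed paths; unmatched paths are 'medium'."""
    def tier_for(path):
        for pattern, tier in RISK_RULES:
            if fnmatchcase(path, pattern):
                return tier
        return "medium"

    return max((tier_for(p) for p in changed_paths), key=TIER_ORDER.__getitem__, default="low")


def merge_gate(changed_paths, evidence):
    """Decide whether a PR may merge given the evidence collected so far."""
    tier = risk_tier(changed_paths)
    missing = REQUIRED_EVIDENCE[tier] - set(evidence)
    return {"tier": tier, "missing": sorted(missing), "can_merge": not missing}


print(merge_gate(["docs/readme.md"], {"tests"}))
print(merge_gate(["src/payments/charge.py", "docs/x.md"], {"tests", "review"}))
```

Because the gate returns plain data, the same result can be logged as machine-verifiable evidence or turned into a regression case later.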

Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents?