My 26-Q1 Agentic Coding Workflow

Some notes on building stuff using agents

Cover: The gang and I building an overengineered CLI.

I’ve been doing more and more agentic coding over the past year, and some patterns keep showing up. Not rules, just things that seem to work for me across the board.

Closing the Loop

The agent needs to verify what it built. Not just write code and hope, but actually check that it works. Like a self-healing mechanism.

For an app, that could mean:

- Build the code

- Lint/format

- Run tests

- Run the app

- Inspect the UI

- Debug failures

- Check the logs

You need tooling that lets the agent execute and observe. Sometimes that means writing a small script, sometimes it’s just about adding proper instructions to its context. Sometimes it’s a browser automation tool, and sometimes you build a custom skill, like I did with android-use.
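As an illustration of the "small script" option, here is a minimal sketch of a single verification entry point an agent could call after every change. It assumes the project's build, lint, and test steps can be run as shell commands. The command lists below are placeholders so the sketch runs anywhere; they are not taken from any real setup.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class StepResult:
    name: str
    ok: bool
    output: str


# Placeholder commands so the sketch runs anywhere. Replace them with your
# project's real invocations, e.g. ["npm", "run", "build"] or ["pytest", "-q"].
STEPS = [
    ("build", [sys.executable, "-c", "print('build ok')"]),
    ("lint", [sys.executable, "-c", "print('lint ok')"]),
    ("test", [sys.executable, "-c", "print('tests ok')"]),
]


def run_step(name: str, cmd: list) -> StepResult:
    """Run one step and capture stdout/stderr so the agent can read what happened."""
    proc = subprocess.run(cmd, capture_output=True, text=True)
    return StepResult(name, proc.returncode == 0, proc.stdout + proc.stderr)


def verify(steps=STEPS) -> list:
    """Run steps in order, stopping at the first failure (later steps depend on earlier ones)."""
    results = []
    for name, cmd in steps:
        result = run_step(name, cmd)
        results.append(result)
        if not result.ok:
            break
    return results


def report(results: list) -> str:
    """Compact, agent-readable summary: one line per step, full output only on failure."""
    lines = []
    for r in results:
        lines.append(f"[{'PASS' if r.ok else 'FAIL'}] {r.name}")
        if not r.ok:
            lines.append(r.output.strip())
    return "\n".join(lines)


def main() -> int:
    """Print the report and return a shell-style exit code (0 = everything passed)."""
    results = verify()
    print(report(results))
    return 0 if all(r.ok for r in results) else 1
```

Wire `main()` to a script entry point (for example `raise SystemExit(main())`) and tell the agent in its instructions to run it after each change. Printing full output only for the failing step keeps the feedback short enough to fit comfortably in context.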

Closing the agent loop is something I’m really leaning into now. Basically, given a goal, an agent needs to be able to derive a plan, get the world state, reason about which action will move it toward that goal, take that action, and VERIFY the state again. Then loop.

Without being able to verify what happened, it can easily get lost. If you think about it, that’s not its “fault”; you gotta help it out.
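Here is the same loop written out as a minimal sketch. The `plan`, `observe`, `decide`, `act`, and `goal_reached` callables are hypothetical stand-ins for whatever your harness provides (an LLM call, a test run, a screenshot, a log read). What matters is the shape: every action is followed by a fresh observation before the next decision, and a step cap stops a confused agent from looping forever.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class LoopResult:
    done: bool                                    # did the verified state satisfy the goal?
    steps: int                                    # how many act/observe cycles ran
    history: list = field(default_factory=list)  # (action, observed state) pairs


def run_agent_loop(
    goal: str,
    plan: Callable[[str], list],          # goal -> ordered list of planned steps
    observe: Callable[[], Any],           # read the current world state
    decide: Callable[[list, Any], Any],   # (plan, state) -> next action
    act: Callable[[Any], None],           # perform the action in the world
    goal_reached: Callable[[Any], bool],  # verification: does this state meet the goal?
    max_steps: int = 20,                  # safety cap so the loop always terminates
) -> LoopResult:
    steps_plan = plan(goal)
    history = []
    state = observe()
    for step in range(max_steps):
        if goal_reached(state):
            return LoopResult(True, step, history)
        action = decide(steps_plan, state)
        act(action)
        # VERIFY: re-read the world instead of assuming the action worked.
        state = observe()
        history.append((action, state))
    return LoopResult(goal_reached(state), max_steps, history)


if __name__ == "__main__":
    # Toy world: the "goal" is a counter reaching 3.
    world = {"n": 0}
    result = run_agent_loop(
        "reach 3",
        plan=lambda g: ["increment"],
        observe=lambda: world["n"],
        decide=lambda p, s: p[0],
        act=lambda a: world.update(n=world["n"] + 1),
        goal_reached=lambda s: s >= 3,
    )
    print(result.done, result.steps)  # True 3
```

If `observe` is missing, the loop degrades into "act and hope", which is exactly the failure mode described above.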

We briefly talked about this on our Fragmented podcast last week; skip to 09:03.

Multitasking

Agents take time. While one’s working, you may jump to something else. Maybe a different part of the codebase. Maybe a different project.

I try to keep my parallel work to three things at most: a backend change here, a frontend tweak there, a docs update somewhere else. When your system is modular enough, you can hop between them without much hassle.

It’s oddly addictive. There’s always something you could be doing, but BEWARE: this can fry your brain faster than manual coding plus multitasking would.

You Don’t Have to Read All the Code

Well, it depends on what you’re doing. Please don’t do it on your banking app or in any critical system. I’d like my planes flown without vibe coding for now, thank you.

But IN SOME CASES, it’s fine. Think of your home automation scripts, or some fun side project for tracking your run stats.

The thing is, if you structure things right, you really don’t have to read it all. Focus on the boundaries. Make sure data formats and validation between modules are sound, then test and trust the interior.
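To make "focus on the boundaries" concrete, here is a minimal sketch of validating data at the point where it crosses from one module into another, using only the standard library. The `RunRecord` shape is hypothetical, borrowed from the run-stats side project mentioned above. The idea is that this parsing function is the part you read carefully, and everything downstream can assume well-formed input.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RunRecord:
    day: date
    distance_km: float
    duration_min: float


class BoundaryError(ValueError):
    """Raised when data crossing a module boundary does not match the contract."""


REQUIRED = {"day", "distance_km", "duration_min"}


def parse_run(raw: dict) -> RunRecord:
    """Validate untrusted input once, at the boundary, and return a typed, immutable record."""
    missing = REQUIRED - set(raw)
    if missing:
        raise BoundaryError(f"missing fields: {sorted(missing)}")
    try:
        day = date.fromisoformat(raw["day"])
        distance = float(raw["distance_km"])
        duration = float(raw["duration_min"])
    except (TypeError, ValueError) as exc:
        raise BoundaryError(f"malformed field: {exc}") from exc
    if distance <= 0 or duration <= 0:
        raise BoundaryError("distance and duration must be positive")
    return RunRecord(day, distance, duration)
```

Modules on the inside only ever receive `RunRecord` values, so their code can be tested and trusted without re-checking the same invariants.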

Just as a staff engineer trusts their peers to implement something, you may eventually build enough trust that, if your agents have built and tested things the way you asked, they will work. Also, there’s absolutely no reason to skip writing tests anymore. Use that.

This focus on boundaries maps to functional core, imperative shell, which always sounded to me like a nimbler version of hexagonal architecture. The idea is to separate calculation from action:

Functional Core: Pure functions, immutable data. Takes input, returns output. No side effects. No touching the outside world.

Imperative Shell: Thin layer that does the I/O. Reads user input, calls the core for logic, writes to the database, updates the UI.

Side effects live in the shell. Logic lives in the core. Testing becomes straightforward. So does working with agents (and humans).
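A minimal sketch of that split, again using the hypothetical run-stats project. The core is a pure function over plain data that can be unit tested without mocks; the shell is the only place that touches files. The JSON input layout (a list of objects with `distance_km` and `duration_min`) is an assumption for illustration.

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path


# ---- Functional core: pure functions over immutable data, no I/O ----
@dataclass(frozen=True)
class Summary:
    runs: int
    total_km: float
    avg_pace_min_per_km: float


def summarize(runs: list) -> Summary:
    """Same input, same output, no side effects: trivial to unit test."""
    total_km = sum(r["distance_km"] for r in runs)
    total_min = sum(r["duration_min"] for r in runs)
    pace = total_min / total_km if total_km else 0.0
    return Summary(len(runs), round(total_km, 2), round(pace, 2))


# ---- Imperative shell: thin layer that does the I/O and delegates logic to the core ----
def main(input_path: Path, output_path: Path) -> None:
    runs = json.loads(input_path.read_text())           # read (side effect)
    summary = summarize(runs)                            # calculate (pure)
    output_path.write_text(json.dumps(asdict(summary)))  # write (side effect)
```

An agent can change `summarize` freely as long as its tests pass, while the few lines in `main` are small enough to review by eye.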

Semantic Maps

I have a folder I call .ai-artifacts. It sits at the root and at relevant units throughout the project.

A unit is whatever has enough meaning to you. Could be the project root, a package, a module, or a feature folder. Like unit tests, you define what this boundary means. I could probably write a full article on the things I like to keep in here.

Inside each .ai-artifacts folder, the main file is the semantic map. It describes what’s in that unit.

Everything you’d want an agent to know before touching this code.

In my case, they can look like this.

It’s independent of the global AGENTS.md/CLAUDE.md, so I control when they load. When I want deeper context about src/auth/, I run /load-semantic-map to inject that folder’s map plus the maps of its parent folders up to the root.
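A minimal sketch of what a command like /load-semantic-map could do under the hood: walk from the target folder up to the project root, collect every map along the way, and order them root first so broad context comes before specific context. The file name `semantic-map.md` inside `.ai-artifacts` is a hypothetical convention for this example.

```python
from pathlib import Path

ARTIFACTS_DIR = ".ai-artifacts"
MAP_NAME = "semantic-map.md"  # hypothetical file name inside each .ai-artifacts folder


def collect_semantic_maps(target: Path, root: Path) -> list:
    """Existing maps from `root` down to `target` (inclusive), ordered root first."""
    target, root = target.resolve(), root.resolve()
    if target != root and root not in target.parents:
        raise ValueError(f"{target} is not inside {root}")
    found = []
    current = target
    while True:
        candidate = current / ARTIFACTS_DIR / MAP_NAME
        if candidate.is_file():
            found.append(candidate)
        if current == root:
            break
        current = current.parent
    return list(reversed(found))  # broad context first, specific context last


def build_context(target: Path, root: Path) -> str:
    """Concatenate the maps into one text block to inject into the agent's context."""
    root = root.resolve()
    sections = []
    for path in collect_semantic_maps(target, root):
        unit = path.parent.parent.relative_to(root).as_posix()  # "." for the root itself
        sections.append(f"## {unit}\n{path.read_text()}")
    return "\n\n".join(sections)
```

Folders without a map are simply skipped, so a unit only gets a map when you decide it is worth one.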

I also have an agent that creates or updates a semantic map. It uses the git hash to check whether anything has changed, then generates new maps or updates existing ones. They’re opt-in. I decide when the context is worth the tokens.
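One way such a "has anything changed?" check could work, as a sketch: record the Git tree hash of the unit inside the map when it is generated, and regenerate only when the current hash differs. `git rev-parse HEAD:<path>` returns the hash of the tree object for that folder at HEAD, so it changes whenever any committed file under the folder changes. Uncommitted edits are not reflected. The `source-hash` comment header is a hypothetical convention for this example.

```python
import re
import subprocess
from pathlib import Path
from typing import Optional

# Hypothetical convention: each generated map starts with a line like
# "<!-- source-hash: <tree hash> -->" recording the tree it was built from.
HASH_LINE = re.compile(r"<!--\s*source-hash:\s*([0-9a-f]+)\s*-->")


def current_tree_hash(unit: Path, repo: Path = Path(".")) -> str:
    """Tree hash of `unit` at HEAD. Only committed content counts."""
    rel = unit.resolve().relative_to(repo.resolve()).as_posix()
    spec = "HEAD:" if rel == "." else f"HEAD:{rel}"
    out = subprocess.run(
        ["git", "rev-parse", spec], cwd=repo, capture_output=True, text=True, check=True
    )
    return out.stdout.strip()


def stored_hash(map_text: str) -> Optional[str]:
    """Hash recorded in an existing map, or None if the map has no header."""
    match = HASH_LINE.search(map_text)
    return match.group(1) if match else None


def needs_update(map_text: Optional[str], current: str) -> bool:
    """Regenerate when there is no map yet, no recorded hash, or the hash differs."""
    if map_text is None:
        return True
    return stored_hash(map_text) != current


def with_hash_header(map_body: str, current: str) -> str:
    """Stamp a freshly generated map with the hash it describes."""
    return f"<!-- source-hash: {current} -->\n{map_body}"
```

Because the comparison is a plain string check, the expensive part (asking a model to rewrite the map) only runs for units whose tree hash actually moved.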

OpenCode is building something similar:

This is pretty cool to see!

I was manually doing by combining claude rules and a few commands to generate a "semantic mind map" for each "unit" of the project.

It worked pretty well. Having it baked into the harness is awesome. https://t.co/mWwCddfxq8

— iury souza (@IurySza) January 28, 2026

They also just added a /learn command that captures session insights back into scoped context files, which is similar to what I do when I update a semantic map.

We might be on to something.

Specialized Agents

I’m playing with different agent types for specific areas of work. I don’t use all of them all the time, but these are the categories where I’ve found it useful to spawn a dedicated agent:

- Coder: The main agent. Writes most of the code, with very basic skills/tools and perhaps an MCP for connecting to the IDE of choice.

- Planner: Read-only mode. Explores codebase, designs approach before implementation.

- Reviewer: Fresh eyes after changes are done. This guy is a PITA.

- Tester: Writes tests for what Coder built. Picky. Looks for edge cases.

- Researcher: Connected to a few MCPs to find info online. Meant for scouring the web; it also has a browser tool.

- Scribe: Maintains architecture docs and semantic maps.

Instead of having every skill and MCP globally available, each agent gets equipped with just the subset it needs. Minimal tooling per agent. Focused scope, fewer ways to go wrong. I manually spawn these. No automatic orchestration, I decide when I need a different perspective.
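"Minimal tooling per agent" boils down to an allowlist per role. A sketch, assuming a harness where each agent is configured with a set of tool names. Every tool and MCP name here is made up for illustration; map them to whatever your harness actually exposes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    name: str
    tools: frozenset
    read_only: bool = False


# Hypothetical tool names that can modify the workspace.
WRITE_TOOLS = frozenset({"edit_file", "write_file", "run_shell"})

PROFILES = {
    "coder": AgentProfile("coder", frozenset({"read_file", "edit_file", "write_file", "run_shell", "ide_mcp"})),
    "planner": AgentProfile("planner", frozenset({"read_file", "search_code"}), read_only=True),
    "reviewer": AgentProfile("reviewer", frozenset({"read_file", "search_code", "git_diff"}), read_only=True),
    "tester": AgentProfile("tester", frozenset({"read_file", "write_file", "run_shell"})),
    "researcher": AgentProfile("researcher", frozenset({"web_search_mcp", "browser"}), read_only=True),
    "scribe": AgentProfile("scribe", frozenset({"read_file", "write_file"})),
}


def tools_for(agent: str, available: set) -> set:
    """Give an agent only the intersection of its allowlist and what is actually installed."""
    profile = PROFILES[agent]
    allowed = profile.tools & available
    if profile.read_only:
        # Defensive: even if a write tool slips into a read-only allowlist, strip it.
        allowed -= WRITE_TOOLS
    return set(allowed)
```

Keeping the profiles as data rather than prompts makes it easy to see at a glance which agent can touch what.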

The Agentic Trap

Don’t overdo it. You don’t need an army of subagents. If you’re not working at the frontier of this, I recommend staying out of this rabbit hole.

Im leaning towards this opinion for some time.

Occam's razor, man... https://t.co/4kqObYCTFk pic.twitter.com/yQrj9XB6wI

— iury souza (@IurySza) February 2, 2026

Workflow: High-Level View

- Start on plan-mode: describe what you want in plain English, with a lot of context and as much detail as you can.

  - protips:

    - Build your own.

    - Use dictation tools for talking to the model here. There are tons of options; I settled on VoiceInk some time ago.

- Iterate: Iterate on that plan as much as needed. Get an output file. Open it. Read it through.

- /tracker: Use a command/skill/prompt to break down the work into phases.

- Code: Code it however you prefer. Serial or parallel (don’t overdo it), human in the loop or not.

  - You can have one instance coding, another reviewing, another writing tests. Or do one thing at a time.

  - protip: You don’t need the SOTA model to do all of the work (unless someone else is paying for it). Find a balance of which tasks can be offloaded to faster/cheaper agents. I’m in a kimi2.5-coding + GPT-5.2-Codex with opencode phase now (Jan 26).

- Manage the context: Know when to clear the context and start over. Anything above 100k tokens of context is iffy territory.
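A rough way to notice when a session is drifting into that territory, as a sketch: estimate tokens from characters using the common "about 4 characters per token" heuristic for English text, and flag when the running total crosses a threshold. This is an approximation (real tokenizers vary by model), the 100k limit comes from the tip above, and the 80% warning margin is an arbitrary choice.

```python
from dataclasses import dataclass, field

CHARS_PER_TOKEN = 4          # rough heuristic, not a real tokenizer
SOFT_LIMIT_TOKENS = 100_000  # past this, context gets iffy


def estimate_tokens(text: str) -> int:
    """Approximate token count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


@dataclass
class ContextBudget:
    limit: int = SOFT_LIMIT_TOKENS
    used: int = 0
    messages: list = field(default_factory=list)

    def add(self, text: str) -> None:
        """Record a message and update the running token estimate."""
        self.messages.append(text)
        self.used += estimate_tokens(text)

    def status(self) -> str:
        ratio = self.used / self.limit
        if ratio >= 1.0:
            return "clear"  # past the soft limit: summarize and start a fresh session
        if ratio >= 0.8:
            return "warn"   # getting close: wrap up the current phase first
        return "ok"
```

Most harnesses already report real token counts, so in practice you would feed those in instead of the character estimate.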

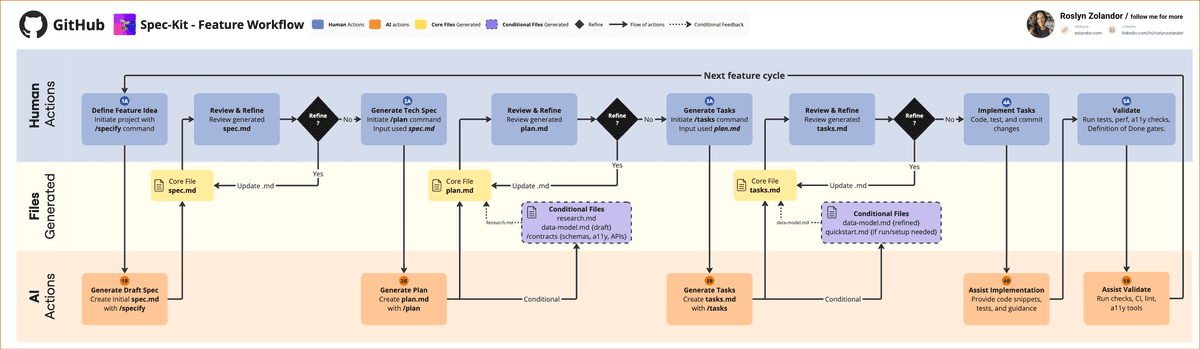

The other day I found out there’s a framework that kinda encompasses a lot of this. They call it spec-kit. I’m not a fan of such frameworks yet because I don’t like losing control of how this works, but I do like it when they show that my experience is somewhat aligned with theirs.

Confirmation bias, am I right?

In any case, the concept is very simple and powerful: You just gotta build good enough context (md files) for the agent to keep working.

Spec-Kit workflow

Hope this is useful!

PS: This will likely change before next month :D